
Pixee Enterprise Server Documentation¶

Welcome to the Pixee Enterprise Server documentation. This guide will help you install, configure, and operate Pixee Enterprise Server in your environment.

Overview¶

Pixee Enterprise Server is a self-hosted solution that brings the power of automated code improvements directly to your infrastructure. It analyzes your codebase, identifies potential improvements, and automatically generates pull requests with fixes and enhancements.

Getting Help¶

If you encounter issues during installation or operation:

- Check the FAQ section for common solutions

- Review the troubleshooting sections in the installation guides

- Contact our support team with detailed information about your environment and the issue

Installation

Installation Overview¶

Pixee Enterprise Server is a self-hosted solution that brings pixee.ai into a customer's infrastructure.

Installation Methods¶

There are currently two methods available for installing Pixee Enterprise Server:

- Embedded Cluster: This option provides the most streamlined installation, configuration, update, and support experience. It is the recommended method of installation for Pixee Enterprise Server because it provides a user-friendly interface for installation and configuration, along with enhanced troubleshooting capabilities.

- Helm Deployment: This option allows users to deploy Pixee Enterprise Server into a managed Kubernetes cluster.

Prerequisites¶

Before installing Pixee Enterprise Server, you'll need to provision the necessary infrastructure.

Common Requirements¶

Database¶

For trial installations you can use the embedded database and skip this section. For production environments, we recommend creating an external database:

- PostgreSQL 17.4+

- 10 GB+ available disk space

- Network connectivity between the Pixee Enterprise Server Kubernetes cluster and the database

- Create a database named pixee_platform (or any name you choose)

- Create a user with permissions to the pixee_platform database
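The network connectivity requirement above can be spot-checked before installation. The following is a minimal sketch, not part of the Pixee tooling: it only confirms that a TCP connection can be opened, and the host name and port in the usage comment are placeholders. Run it from a machine or pod inside the network where Pixee Enterprise Server will run, since the result only reflects the location it is run from.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name-resolution failures.
        return False


# Example usage (placeholder host; 5432 is the default PostgreSQL port):
#   print(can_connect("db.example.internal", 5432))
```

A successful connection does not prove that the database credentials or permissions are correct; it only rules out basic routing and firewall problems.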

External Object Store¶

You can configure Pixee Enterprise Server to use an external object store. If you prefer to use the in-cluster, embedded object store, you may skip this section.

Requirements¶

The following are requirements of an external object store compatible with Pixee Enterprise Server:

- The object store and the Kubernetes cluster are able to communicate over the network

- The object store exposes an S3-compatible API

- A bucket has been created for use as the pixee-analysis-input bucket

AI Providers¶

Advanced AI capabilities are required. See the AI Providers documentation for the AI providers that Pixee Enterprise Server supports.

Infrastructure Requirements¶

For Embedded Cluster installations, create a VM with the following specifications:

- Linux distro: Ubuntu 24.04+ (recommended) or Enterprise Linux 9 (RHEL 9, Rocky Linux, AlmaLinux)

- systemd installed

- For Enterprise Linux 9: SELinux must be in permissive mode (enforcing mode is not supported)

- Allows traffic to egress to the internet (more details here)

- Allows HTTPS traffic to ingress to port 443

- Allows HTTPS traffic to ingress to port 30000 (for embedded cluster admin console)

- 8 vCPU, 32 GB RAM

- 100 GB+ disk with <10 ms write latency (e.g., SSD/NVMe)
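To get a rough feel for whether a disk meets the write-latency requirement above, one option is to time small synchronous writes. The sketch below is an illustration under stated assumptions, not an official Pixee preflight check: the block size and sample count are arbitrary choices, and a dedicated benchmarking tool will give more rigorous numbers.

```python
import os
import statistics
import tempfile
import time


def fsync_latencies_ms(directory: str, samples: int = 50, block_size: int = 4096) -> list[float]:
    """Write small blocks, fsync each one, and return per-write latency in ms."""
    latencies = []
    payload = os.urandom(block_size)
    with tempfile.NamedTemporaryFile(dir=directory) as f:
        for _ in range(samples):
            start = time.perf_counter()
            f.write(payload)
            f.flush()
            os.fsync(f.fileno())  # force the write through to the device
            latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


if __name__ == "__main__":
    # Point this at a directory on the disk you intend to use for data storage;
    # the system temp directory may live on a different (or in-memory) device.
    results = fsync_latencies_ms(tempfile.gettempdir())
    print(f"median: {statistics.median(results):.2f} ms, max: {max(results):.2f} ms")
```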

DNS Configuration: Create the appropriate DNS records so that a domain name resolves to your provisioned virtual machine.
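Once the DNS record exists, it can be verified from any machine that uses the same resolvers as your users. This is a minimal sketch using only the Python standard library; the domain in the usage comment is a placeholder.

```python
import socket


def resolve(hostname: str) -> list[str]:
    """Return the sorted, de-duplicated IP addresses that hostname resolves to."""
    try:
        infos = socket.getaddrinfo(hostname, 443, proto=socket.IPPROTO_TCP)
    except socket.gaierror:
        return []  # the name does not resolve from this machine
    return sorted({info[4][0] for info in infos})


# Example usage (placeholder domain):
#   print(resolve("pixee.example.com"))
```

Compare the returned addresses with the public IP address of the provisioned virtual machine. An empty list means the record has not propagated to the resolver in use, or was not created.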

TLS Certificate: To encrypt traffic to Pixee Enterprise Server, you'll need to generate/acquire a TLS certificate for use with your selected domain name. With the Embedded Cluster installation method, Pixee Enterprise Server can automate the TLS certificate request using LetsEncrypt or self-signed certificates as part of the configuration process.

For Helm deployments, select or create a Kubernetes cluster with the following available to Pixee Enterprise Server:

- 8+ vCPU

- 32+ GB RAM

- 100 GB+ disk with <10 ms write latency (e.g., SSD/NVMe)

- Allow outgoing HTTP traffic to the internet (more details here)

- Allow incoming HTTPS traffic to port 443

- (optional) ingress controller installed and configured

DNS Configuration: Create the appropriate DNS records so that a domain name resolves to your Kubernetes cluster.

TLS Certificate: To encrypt traffic to Pixee Enterprise Server, you'll need to generate or acquire a TLS certificate for use with your selected domain name. With Helm, you have multiple options for TLS certificate management: using cert-manager to automatically provision TLS certificates, using pre-existing TLS certificates as Kubernetes secrets, or terminating TLS outside the cluster (e.g., via a load balancer).

Additional Helm Requirements:

- Kubectl installed and configured to access the target cluster

- Helm CLI installed (version >3.15)

- preflight and support-bundle plugins from troubleshoot.sh installed in the target cluster

- Access to image registry (images.pixee.ai) from Kubernetes cluster

Tip

If you need to pull images from an internal registry, update your values.yaml to override the registry and pullSecrets values for every image listed in the reference section. These values are identified by the patterns **.image.registry and **.image.pullSecrets. If your registry requires authentication, you will also need to create a dockerconfigjson type secret for your internal registry and reference it in the pullSecrets value for each image.
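Finding every image entry that matches the **.image.registry and **.image.pullSecrets patterns by hand is error-prone. The sketch below walks an already-parsed values structure (a nested dict, however you choose to load it) and lists the dotted path of each image mapping. The sample structure is hypothetical and only illustrates the shape; the real keys are the ones listed in the chart's reference section.

```python
from typing import Any, Iterator


def find_image_settings(values: Any, path: tuple[str, ...] = ()) -> Iterator[str]:
    """Yield the dotted path of every `image` mapping found in nested values."""
    if isinstance(values, dict):
        for key, child in values.items():
            child_path = path + (str(key),)
            if key == "image" and isinstance(child, dict):
                yield ".".join(child_path)
            yield from find_image_settings(child, child_path)
    elif isinstance(values, list):
        for index, item in enumerate(values):
            yield from find_image_settings(item, path + (str(index),))


if __name__ == "__main__":
    # Hypothetical structure for illustration only.
    sample = {
        "platform": {"image": {"registry": "images.pixee.ai"}},
        "worker": {"sidecar": {"image": {"registry": "images.pixee.ai"}}},
    }
    for image_path in find_image_settings(sample):
        print(f"{image_path}.registry / {image_path}.pullSecrets")
```

Each printed path is a place where the registry and pullSecrets overrides would need to be set.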

Installation Instructions¶

Installation¶

To install using Embedded Cluster, follow these steps:

- From your virtual machine, download the Pixee installer:

      curl -f "https://distribution.pixee.ai/embedded/pixee/<release channel>" -H "Authorization: <your license ID>" -o pixee.tgz

- Extract the Pixee installer:

      tar -xvzf pixee.tgz

- Run the Pixee installer:

      sudo ./pixee install --license license.yaml

  Info

  The directory used for data storage can be changed by passing the --data-dir flag.

- You will be prompted to set an admin password; this password will grant access to the admin console later.

- When the installer completes, visit the admin console URL in your browser:

      https://<domain name or vm ip>:30000

- You may receive a self-signed certificate warning from your browser; this is expected.

- If you have a domain name and TLS certificate available you can configure the admin console to use them by following the prompts, or you can

- The admin console will then load the configuration page. You will be directed through a workflow that will step you through configuring Pixee Enterprise Server.

To install using Helm Deployment, follow these steps:

- Authenticate against the Pixee Helm Registry:

      helm registry login registry.pixee.ai --username <your email address> --password <your license key>

- Preflight checks: If there are any known issues that would prevent successful installation, the preflight checks will report them. To run the preflight checks:

      helm template oci://registry.pixee.ai/pixee/<release channel>/pixee-enterprise-server --values values.yaml | kubectl preflight -

  If there are no issues, or you are able to address all reported issues, continue with the installation using Helm.

- Helm install: Execute helm against the Kubernetes cluster to install. Be sure to replace the release channel below (likely stable or unstable):

      helm upgrade --install pixee-enterprise-server oci://registry.pixee.ai/pixee/<release channel>/pixee-enterprise-server -f values.yaml -n pixee-enterprise-server --create-namespace

Tip

Be sure to replace <release channel> with your actual assigned channel; this is likely stable or unstable.

Basic Configuration¶

After installation, you'll need to configure the basic settings for Pixee Enterprise Server. These settings include the domain name, protocol, and ingress configuration.

Configuration¶

Configuration is done through the admin console configuration page available after installation at:

https://<domain name or ip address>:30000

When you load the admin console page you will be prompted to enter your admin password. The first time configuring after installation you will be directed through a workflow that will step you through configuring Pixee Enterprise Server.

Basic Settings¶

The settings under the Basic Settings section all require your input. Here you will find the required settings for the Pixee Enterprise Server domain name, TLS options, and AI model provider settings. Make sure you review all of these settings for completeness and accuracy.

Domain¶

Enter the domain name you have assigned to your Pixee Enterprise Server. If you have not assigned a domain name, you can enter the public IP address of your Pixee Enterprise Server instead, but this will limit your TLS options.

Protocol¶

Select the protocol that will be used for your Pixee Enterprise Server. If you select HTTP, ingress traffic to your Pixee Enterprise Server will be unencrypted. The HTTP option is intended for quick testing configurations, or for setups where an external system such as an App Gateway or Load Balancer terminates TLS instead of Pixee Enterprise Server. If an external system (e.g., an App Gateway or Load Balancer) acts as a reverse proxy to your Pixee Enterprise Server, make sure you provide reverse proxy settings in the Advanced Settings section. If you select HTTPS, ingress traffic to your Pixee Enterprise Server will be encrypted, and you will be prompted to configure TLS.

Authentication¶

Pixee Enterprise Server currently supports the following OIDC providers:

Select the OIDC provider you want to use for authentication. If you select Embedded Provider, Pixee Enterprise Server will use its built-in OIDC provider. For other providers, you will need to provide the necessary configuration details such as client ID, client secret, and issuer URL.

See Authentication for more information on specific provider configuration.

AI Providers¶

To use OpenAI directly, select OpenAI and enter your OpenAI API key.

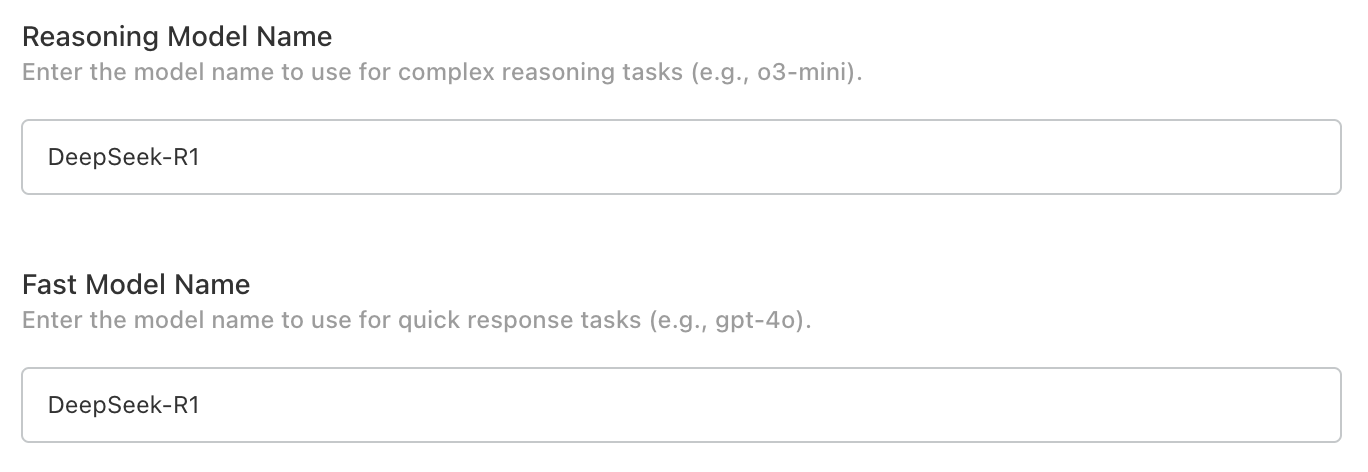

To use Azure OpenAI, select Azure OpenAI and enter your Azure OpenAI resource endpoint, key, and model deployment names for o3-mini.

For Databricks please see: Databricks AI Serving Endpoints.

For Helm deployments, create a values.yaml file and configure the following basic settings:

Domain¶

Set the URL where your Pixee Enterprise Server will be accessible (if no domain name is available, use an external IP address):

    global:
      pixee:
        domain: "<your pixee enterprise server domain name>"

Protocol¶

Set the HTTP protocol (http or https) used to access your Pixee Enterprise Server:

    global:
      pixee:
        protocol: "https"

Info

If you are using TLS to secure traffic to your Pixee Enterprise Server set this to https, even if you terminate TLS outside your cluster.

Ingress¶

If you are using an ingress controller, you can enable and configure the Pixee Enterprise Server ingress resource as follows:

    platform:
      proxy:
        # enable proxy configuration with ingress to allow headers from the ingress controller
        enabled: true
      ingress:
        enabled: true
        className: "<your ingress controller class name (e.g., nginx, gce)>"
        hosts:
          - host: "<your pixee enterprise server domain name>"
            paths:
              - path: "/"
                pathType: "Prefix"
        # If you are securing your Pixee Enterprise Server with TLS via ingress, set the following
        tls:
          - hosts:
              - "<your pixee enterprise server domain name>"
            secretName: "<your tls certificate secret name>"

AI Model Provider - OpenAI¶

To configure access to the OpenAI API, set the following:

    global:
      pixee:
        ai:
          openai:
            key: "<your OpenAI API key>"
            # Optional: Custom OpenAI API base URL (e.g., for Azure OpenAI compatible endpoints)
            # baseUrl: "https://example.cloud.databricks.com/serving-endpoints"
            # -- Use an existing secret instead of creating one
            existingSecret: ""
            secretKeys:
              # -- Secret key containing the api key
              apiKey: "key"
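If you prefer the existingSecret option over placing the API key in values.yaml, the secret has to exist in the cluster before installation. The sketch below builds a standard Kubernetes Opaque Secret manifest as JSON, with the API key stored under the entry named by secretKeys.apiKey ("key" in the example above). The secret name openai-api-key is a hypothetical choice, and the namespace is the one used by the helm install command in this guide.

```python
import base64
import json


def api_key_secret(name: str, namespace: str, api_key: str, key_name: str = "key") -> dict:
    """Build a Kubernetes Opaque Secret manifest holding one API key."""
    encoded = base64.b64encode(api_key.encode("utf-8")).decode("ascii")
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "type": "Opaque",
        "metadata": {"name": name, "namespace": namespace},
        "data": {key_name: encoded},  # Secret data values must be base64-encoded
    }


if __name__ == "__main__":
    # "openai-api-key" is a hypothetical secret name chosen for this example.
    manifest = api_key_secret("openai-api-key", "pixee-enterprise-server", "<your OpenAI API key>")
    print(json.dumps(manifest, indent=2))
```

The printed JSON can be piped to kubectl apply -f -, after which existingSecret would be set to the same secret name.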

AI Model Provider - Azure OpenAI¶

To configure access to Azure OpenAI, set the following:

    global:
      pixee:
        ai:
          azure:
            enabled: true
            key: "<your Azure OpenAI key>"
            # -- Use an existing secret instead of creating one
            existingSecret: ""
            secretKeys:
              # -- Secret key containing the api key
              apiKey: "key"
            endpoint: "<your Azure OpenAI endpoint>"
            deployments:
              o3-mini: "<your model deployment name for o3-mini>"

Databricks AI Serving Endpoints¶

Pixee Enterprise Server can integrate with Databricks AI. See Databricks AI for more information.

To configure Databricks AI serving endpoints, set the following:

    global:
      pixee:
        ai:
          openai:
            enabled: true
            key: "<your Databricks PAT or API key>"
            baseUrl: "https://<your-databricks-workspace>.cloud.databricks.com/serving-endpoints"
            # -- Use an existing secret instead of creating one
            existingSecret: ""
            secretKeys:
              # -- Secret key containing the api key
              apiKey: "key"

Info

The baseUrl should point to your Databricks workspace serving endpoints. Ensure the required model endpoint (o3-mini) is deployed and accessible.

Authentication¶

Pixee Enterprise Server supports multiple authentication providers. This section covers configuration for all supported authentication methods.

Info

Support for OIDC-compatible identity providers is in active development. Contact support@pixee.ai to request additional support.

Embedded Identity Provider (Authentik)¶

Pixee Enterprise Server includes Authentik as an embedded identity provider. This provides a full-featured identity management solution without requiring an external OIDC provider.

Features¶

- User management with web-based admin interface

- Support for local users and passwords

- Federation with external identity providers (Google Workspace, Oracle, etc.)

- Self-service password change

- Session management

- Pixee-branded login experience

Email / SMTP Support

To enable email features such as self-service password reset, email verification, and notification emails, configure the SMTP / Email Settings section in the admin console. An SMTP server (e.g., your organization's mail relay, Gmail, SendGrid) is required. Without SMTP configured, password resets must be performed by an administrator through the Authentik admin interface or by using the recovery key command described below.

Configuration¶

To enable Authentik in Embedded Cluster deployments:

- Navigate to the admin console, select the Config tab, then go to the Basic Settings section

- Under Authentication mode, select "Authentik"

- Save and deploy the configuration

After deployment, Authentik will automatically initialize with the Pixee OIDC application pre-configured. The default admin credentials are displayed in the config page.

To enable Authentik in Helm deployments, set the following in your values.yaml:

    authentik:
      enabled: true
      authentik:
        secret_key: "<generate a secure random string - must not change after install>"
        bootstrap:
          password: "<initial admin password for akadmin user>"
        postgresql:
          host: "<postgresql-host>"
          name: "authentik"
          user: "authentik"
          password: "<database-password>"
        redis:
          host: "<redis-host>"
          port: 6379
      # Inject the OIDC client secret into the Authentik worker so the blueprint can
      # read it via !Env. References the same secret used by the platform.
      worker:
        env:
          - name: PIXEE_OIDC_CLIENT_SECRET
            valueFrom:
              secretKeyRef:
                name: oidc-client-secrets
                key: secret

    global:
      pixee:
        access:
          enabled: true
          oidc:
            client:
              id: "pixee"
              secret: "<generate a secure random string>"
              redirectUri: "https://<your-domain>/api/auth/callback"
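The configuration above asks for secure random strings in two places (the Authentik secret_key and the OIDC client secret). One way to generate them is with Python's standard secrets module, as in this minimal sketch. The byte lengths are illustrative choices, not values required by Pixee or Authentik.

```python
import secrets


def random_secret(num_bytes: int = 48) -> str:
    """Return a URL-safe random string built from num_bytes of entropy."""
    return secrets.token_urlsafe(num_bytes)


if __name__ == "__main__":
    print("authentik secret_key:", random_secret())
    print("OIDC client secret:  ", random_secret(32))
```

Store the generated secret_key somewhere safe, since it must not change after installation.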

Initial Setup¶

After deploying with Authentik enabled:

Embedded Cluster:

- Access the admin interface by navigating to:

      https://<your-domain>/authentik/

  Log in with username akadmin and the password shown in the admin console config page (your license ID). After logging in, click the Admin interface button to access user management.

- Change the admin password (recommended): Navigate to Directory > Users, select akadmin, and update the password. This change will persist across upgrades.

- Create additional users as needed through the Authentik admin interface

Helm:

- Find the admin password in your values.yaml under authentik.authentik.bootstrap_password

- Access the admin interface by navigating to:

      https://<your-domain>/authentik/

  Log in with username akadmin and the configured password, then click the Admin interface button.

- Create additional users as needed through the Authentik admin interface

Recovery Access

If you need to reset a user's password, the easiest way is to create a recovery link from the Authentik admin UI:

- Navigate to Directory → Users and select the user

- Click Create Recovery Link — you can set how long the link stays valid (default: 30 minutes)

- Send the link to the user; they will be prompted to set a new password

As a CLI fallback, you can generate a recovery link via kubectl:

kubectl exec -n <namespace> deploy/<release-name>-authentik-server -- ak create_recovery_key 30 akadmin

This generates a recovery URL valid for 30 minutes.

Upgrades and Persistence

- User accounts, passwords, and settings are stored in the Authentik database and persist across upgrades

- Password changes made by users or admins will not be overwritten during upgrades

- The OIDC application configuration is managed declaratively and will be updated automatically during upgrades

Using Authentik behind a load balancer on a non-standard port

If your Pixee Enterprise Server is accessed through a load balancer on a non-standard port (e.g., port 5443), you must configure the reverse proxy settings for OIDC authentication to work correctly. See Reverse Proxy Settings — Non-Standard Port for setup instructions.

User Management¶

Users are managed through the Authentik admin interface at https://<your-domain>/authentik/if/admin/.

Creating Users¶

- In the admin interface, go to Directory → Users and click Create

- Fill in the user's details (username, display name, email) and click Create

- After creating the user, select them and click Create Recovery Link to generate a one-time password setup link — you can choose how long the link stays valid

- Send the recovery link to the user; they will be prompted to set their password on first visit

For more details, see the Authentik documentation on creating users and creating recovery links.

Editing and Deleting Users¶

- Go to Directory → Users, select a user, and update their details or deactivate their account

- Users created here can log in to Pixee Enterprise Server

Federating External Identity Providers¶

Authentik supports federating external identity providers so users can log in with their existing corporate credentials. After creating a source for your identity provider (see provider-specific sections below), you must add it to the login page.

Works behind a corporate egress proxy

Authentik honors the standard HTTP_PROXY / HTTPS_PROXY / NO_PROXY environment variables. When you configure an outbound HTTP proxy in the admin console, those values flow through to Authentik automatically, so back-channel calls to your identity provider (token exchange, userinfo, JWKS) route through the proxy. The no_proxy exclusion list is also honored if you need certain hosts to bypass the proxy.

Adding a Source to the Login Page¶

This step is the same for all federated identity providers. After creating a source in Authentik:

- In the Authentik admin interface, go to Flows and Stages → Flows

- Click on default-authentication-flow

- Go to the Stage Bindings tab

- Click Edit Stage on the default-authentication-identification stage

- Under Source settings, add your identity provider source to the Selected sources field

- Click Update to save

Users will now see the identity provider as a login option on the Authentik login page. Settings made on this stage (selected sources, user fields, recovery flow) are preserved across deploys — the Pixee Helm chart does not overwrite them. To redirect users directly to the identity provider instead of showing a selection page, see Auto-Redirect to Identity Provider. To show a self-service password recovery link, see Enable "Forgot Password?" Link on the Login Page.

Optional: Customize Username Derivation¶

By default, Authentik populates each new federated user's username from the OIDC preferred_username claim — typically the full UPN (e.g. john.smith@example.com). To derive a shorter username from the email or UPN claim instead (e.g., john.smith from john.smith@example.com):

- In the Authentik admin interface, go to Customization → Property Mappings → Create → OAuth Source Property Mapping

- Configure the mapping with one of the following, depending on your identity provider:

  Google:

  - Name: Google Email to Username

  - Expression:

        return {"username": info.get("email", "").split("@")[0]}

  Microsoft Entra ID:

  - Name: Entra UPN to Username

  - Expression:

        # Username derivation, in order of preference. Falls through to
        # the enrollment-flow prompt if nothing is available.
        upn = info.get("preferred_username") or info.get("email")
        name = info.get("name", "").strip()
        if upn:
            # "first.last@example.com" → "first.last"
            username = upn.split("@")[0]
        elif name:
            # "First Last" → "first.last"
            username = name.lower().replace(" ", ".")
        else:
            # Nothing usable in the token. The enrollment flow's prompt
            # stage will ask the user to type one.
            username = None
        return {
            "username": username,
            "name": info.get("name", ""),
            "email": info.get("email", ""),
        }

  The fallback to name handles environments where Microsoft's userinfo response omits preferred_username and email, e.g., when the App Registration in Azure has not been granted (or admin-consented to) the email and profile API permissions, or when the user account itself has no primary email set.

- Click Finish

- Go to Directory → Federation and Social login → edit the OAuth source for that identity provider

- Under User Property Mappings, add the mapping you just created to the Selected User Property Mappings field

- Click Update

New users will now be assigned a username automatically based on the identity provider's claim.
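Before saving a mapping, it can help to exercise the expression logic locally against a few claim sets. Authentik evaluates the expression body with info already defined, so the sketch below wraps the Entra expression in an ordinary function purely for local testing; the function name and the sample claims are made up for illustration.

```python
from typing import Optional


def entra_mapping(info: dict) -> dict:
    """Local copy of the Entra expression body, wrapped in a function."""
    upn = info.get("preferred_username") or info.get("email")
    name = info.get("name", "").strip()
    username: Optional[str]
    if upn:
        username = upn.split("@")[0]  # "first.last@example.com" -> "first.last"
    elif name:
        username = name.lower().replace(" ", ".")  # "First Last" -> "first.last"
    else:
        username = None  # the enrollment flow will prompt for a username
    return {
        "username": username,
        "name": info.get("name", ""),
        "email": info.get("email", ""),
    }


if __name__ == "__main__":
    # Made-up claim sets covering each branch of the expression.
    samples = [
        {"preferred_username": "first.last@example.com", "name": "First Last"},
        {"name": "First Last"},
        {"sub": "abc123"},
    ]
    for claims in samples:
        print(entra_mapping(claims)["username"])
```

When pasting logic back into Authentik, copy only the function body, since the Expression field expects top-level statements ending in return.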

Troubleshooting Property Mappings¶

Most installs work without any custom mapping — Authentik's built-in extraction reads preferred_username, name, and email from any standard OIDC source and populates the user record automatically. The mapping above is only needed if you want a different username derivation (e.g. stripping the @<host> suffix).

If sign-in fails with "Aborting write to empty username" or new user records have empty fields:

- Inspect what the IdP actually returned by temporarily adding a debug log line to the property mapping's Python expression.

  Open the Authentik admin UI at https://<your-domain>/authentik/if/admin/ and navigate to Customization → Property Mappings. Click on the mapping you want to debug; the edit panel opens on the right. The form has several fields; the one you want is the multi-line Expression text area, which contains the Python code that runs during sign-in.

  Before (the expression you already have, for example):

      upn = info.get("preferred_username") or info.get("email")
      return {"username": upn.split("@")[0] if upn else None}

  After: add ak_logger.warning(...) as the first line. The existing code stays underneath, untouched:

      ak_logger.warning("oauth source claims", info_keys=list(info.keys()), info=dict(info))
      upn = info.get("preferred_username") or info.get("email")
      return {"username": upn.split("@")[0] if upn else None}

  Click Update to save. Then attempt a sign-in; the expression runs and writes a structured JSON log line to the authentik-server pod's stdout.

  About ak_logger

  ak_logger is a Python name pre-injected into every Authentik property-mapping expression (alongside info, properties, request). It's not a CLI tool or a script file; it only exists inside the Expression text area when Authentik evaluates the mapping. See Authentik's Sources expression property mappings reference for the full list of available names and other example debug snippets.

  Read the resulting log line from the authentik-server pod.

  Embedded Cluster:

      # From the Pixee Enterprise Server virtual machine
      sudo ./pixee shell
      kubectl logs -n kotsadm \
        -l app.kubernetes.io/component=server,app.kubernetes.io/name=authentik \
        --tail=200 | grep "oauth source claims"

  Helm:

      kubectl logs -n <namespace> \
        -l app.kubernetes.io/component=server,app.kubernetes.io/name=authentik \
        --tail=200 | grep "oauth source claims"

  Replace <namespace> with the namespace you installed the chart into (often default).

  The matching line is a JSON object. The first positional argument to ak_logger.warning(...) lands in the event field, keyword arguments become top-level fields, and the logger field is set to the mapping's Name, which is useful for filtering to a specific mapping with grep '"logger":"<your-mapping-name>"'.

- Interpret the info dict and act on what's missing:

  | Symptom in info | Likely cause | Fix |
  | --- | --- | --- |
  | Only sub and picture present | The source isn't requesting OpenID Connect scopes, or the IdP isn't granting them | On the Authentik source, expand Protocol settings and set Additional Scopes to email profile. For Entra/Azure App Registrations, also confirm under API permissions that email, openid, profile are added under Microsoft Graph → Delegated AND show "Granted for <tenant>" in the Status column |
  | name present, preferred_username/email missing | The user account has no UPN/email on the IdP side, or email permission isn't admin-consented | Either grant the missing permissions on the IdP, or fall back to deriving the username from name in the mapping (see the Entra UPN to Username mapping above) |
  | All claims present but mapping still fails | Likely a Python error in the expression | Look for Failed to execute property mapping lines in the same log |

  Remove the debug line from the mapping expression once the issue is identified; it's noisy at sign-in volume.
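As an alternative to grep, the JSON log lines can be filtered and summarized with a short script. This is a sketch that relies only on the fields described above (event, logger, and the info_keys keyword argument from the debug line); the sample log line is hypothetical and abbreviated, so real lines will carry additional fields.

```python
import json
from typing import Iterable, Iterator


def matching_events(lines: Iterable[str], event: str = "oauth source claims") -> Iterator[dict]:
    """Yield parsed JSON log records whose `event` field equals event."""
    for line in lines:
        line = line.strip()
        if not line.startswith("{"):
            continue  # skip non-JSON output
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip truncated or malformed lines
        if isinstance(record, dict) and record.get("event") == event:
            yield record


if __name__ == "__main__":
    # Hypothetical, abbreviated log line shaped like the description above.
    sample = [
        '{"event": "oauth source claims", "logger": "Entra UPN to Username", '
        '"info_keys": ["sub", "picture"]}'
    ]
    for record in matching_events(sample):
        print(record.get("logger"), sorted(record.get("info_keys", [])))
```

To process live output, pipe kubectl logs into the script and pass sys.stdin to matching_events in place of the sample list.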

Google OAuth¶

Pixee Enterprise Server supports Google as an identity provider using OAuth 2.0 / OpenID Connect. This works with any Google account (personal Gmail or Google Workspace).

Step 1: Create OAuth Credentials in Google Cloud Console¶

- Go to Google Cloud Console and select or create a project

- Go to APIs & Services → Credentials → Create Credentials → OAuth client ID

- If prompted, configure the OAuth consent screen first:

  - User Type: Internal (for Google Workspace) or External (for any Google account)

  - App name: Pixee Enterprise Server

  - Authorized domains: add your domain (e.g., getpixee.com)

- Create the OAuth client ID:

  - Application type: Web application

  - Name: Pixee Enterprise Server

  - Authorized redirect URIs: https://<your-domain>/authentik/source/oauth/callback/google/

- Copy the Client ID and Client Secret

Step 2: Create an OAuth Source in Authentik¶

- In the Authentik admin interface, go to Directory → Federation and Social login → Create → Google OAuth Source

- Configure:

  - Name: Google (or Google Workspace)

  - Slug: google

  - Consumer Key: your Client ID from Step 1

  - Consumer Secret: your Client Secret from Step 1

- Click Create

After creating the source, add it to the login page. Authentik will populate each user's username from the OIDC preferred_username claim automatically; to shorten it (or if sign-in prompts the user for a username), see Optional: Customize Username Derivation.

Microsoft Entra ID (OAuth)¶

Pixee Enterprise Server supports Microsoft Entra ID (formerly Azure AD) as a federated identity provider through Authentik using OAuth 2.0 / OpenID Connect. This is the recommended way to integrate Entra ID — see the deprecation notice on the Microsoft Entra Authentication section below.

The slug appears in both steps and must match

The slug at the end of the Redirect URI in Step 1 and the Slug of the Authentik source in Step 2 must be identical, or the callback after a successful Entra login will fail. The examples below use entra-id; you can choose any slug, but use the same value in both places.

Step 1: Retrieve App Registration Details¶

Follow the Authentik documentation for Microsoft Entra ID OAuth integration to create the App Registration in Microsoft Entra ID. When setting the Redirect URI, use:

https://<your-domain>/authentik/source/oauth/callback/entra-id/

The slug at the end (entra-id) must match the slug you assign to the source in Authentik in Step 2.
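Because the slug has to match in both places, it can be convenient to derive the redirect URI from the slug rather than typing it twice. The helper below is a small sketch that follows the URL pattern shown above; the function names and the example domain are placeholders, not part of Authentik or Pixee.

```python
def oauth_callback_url(domain: str, slug: str) -> str:
    """Build the redirect URI for an Authentik OAuth source with the given slug."""
    return f"https://{domain}/authentik/source/oauth/callback/{slug}/"


def slug_from_redirect_uri(redirect_uri: str) -> str:
    """Extract the trailing slug from a redirect URI built with the pattern above."""
    return redirect_uri.rstrip("/").rsplit("/", 1)[-1]


if __name__ == "__main__":
    # "pixee.example.com" is a placeholder domain.
    uri = oauth_callback_url("pixee.example.com", "entra-id")
    print(uri)
    print(slug_from_redirect_uri(uri))
```

Running slug_from_redirect_uri on the URI registered in Entra gives the value to compare against the Slug field of the Authentik source.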

After creating the app registration, on the Overview page, save the Application (client) ID — you will use it as the Consumer Key in Authentik:

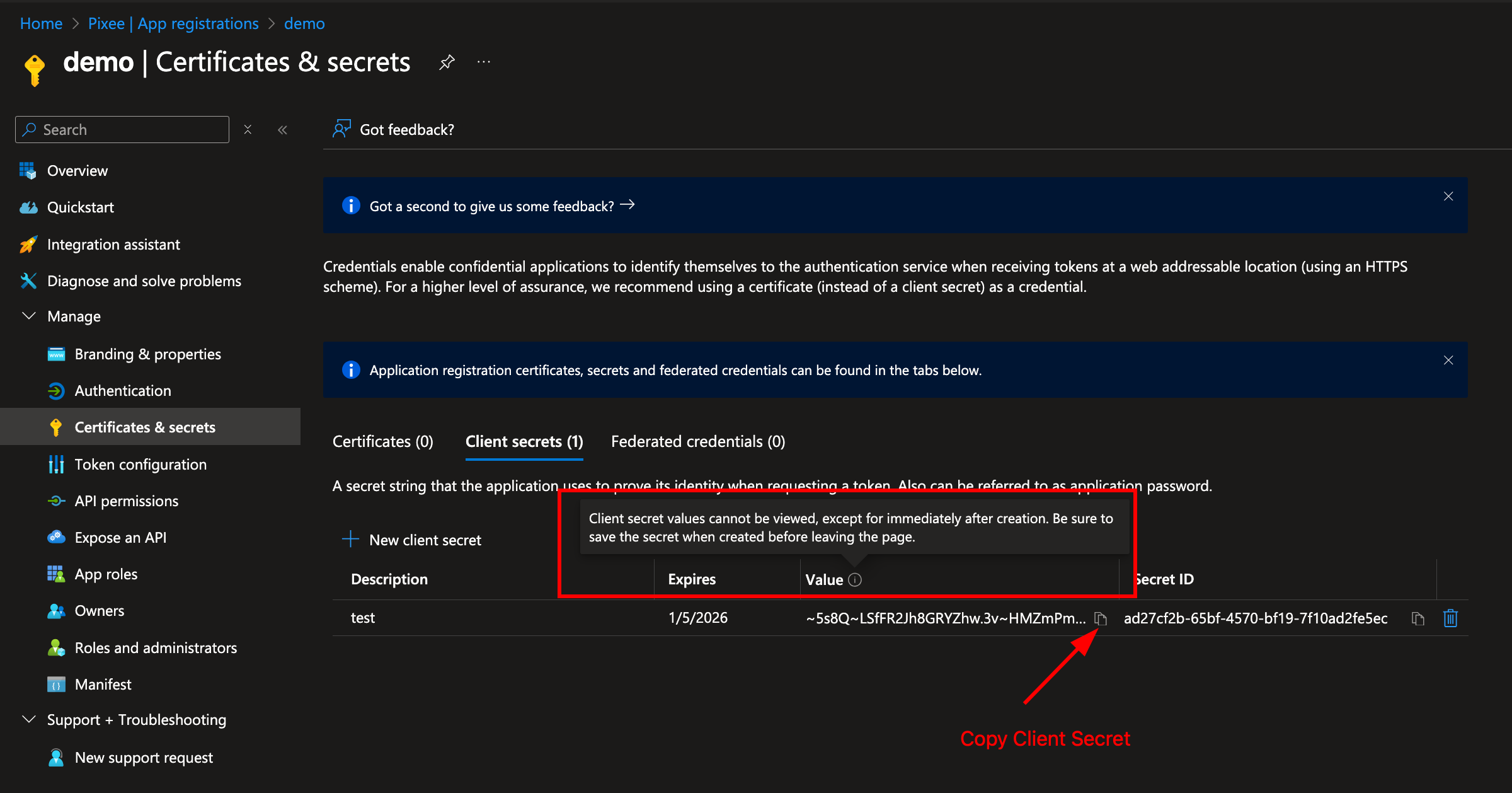

Navigate to Certificates & secrets in the left navigation bar and click New client secret:

Copy the secret Value immediately — it will not be shown again. This is used as the Consumer Secret in Authentik:

The OIDC endpoint URLs (authorization, token, JWKS) can be obtained from the Endpoints section of the App Registration:

Step 2: Create the Entra ID OAuth Source in Authentik¶

Follow the Authentik documentation for Microsoft Entra ID OAuth integration to create the source in Authentik, using the Application (client) ID and client secret value from Step 1.

Set the Slug to entra-id so the callback URL matches the redirect URI you registered in Step 1.

After creating the source, add it to the login page to enable it. Authentik will populate each user's username from Entra's preferred_username claim automatically; to shorten it (or if sign-in prompts the user for a username), see Optional: Customize Username Derivation. If sign-in fails or new user records arrive with empty email/name, see Troubleshooting Property Mappings.

Google Workspace (SAML)¶

Pixee Enterprise Server supports Google Workspace as an external identity provider using SAML.

Step 1: Create a SAML App in Google Workspace¶

- Go to Google Admin Console (admin.google.com) → Apps → Web and mobile apps → Add app → Add custom SAML app

- Enter a name (e.g., Pixee Enterprise Server) and click Continue

- Copy the SSO URL and Certificate from Google; you will need these for the Authentik source configuration

- Under Service Provider Details, set:

  - ACS URL: https://<your-domain>/authentik/source/saml/google/acs/

  - Entity ID: https://<your-domain>/authentik/source/saml/google/metadata

  - Name ID format: EMAIL

  - Name ID: Basic Information > Primary email

- Check the Signed response checkbox

- Click Continue, then Finish

Enable the app for users

By default, new SAML apps in Google Workspace are OFF for everyone. You must turn it on:

- Click on the newly created app

- Click User access

- Set the service status to ON for everyone (or for the appropriate organizational units)

- Click Save

Step 2: Create a SAML Source in Authentik¶

Follow the Authentik documentation for Google Workspace SAML integration to create a SAML source using the SSO URL and Certificate from Step 1.

- In the Authentik admin interface, go to Directory → Federation and Social login → Create → SAML Source

- Set the Name (e.g., Google Workspace) and Slug (e.g., google)

- Set the Icon field to /static/authentik/sources/google.svg so the Google logo appears on the login page

- Set the SSO URL to the value copied from Google (e.g., https://accounts.google.com/o/saml2/idp?idpid=<your-idp-id>)

- Set the Binding Type to Redirect (required for auto-redirect to work)

- Upload the Signing Certificate downloaded from Google

After creating the source, add it to the login page.

Troubleshooting¶

- 403 app_not_configured_for_user: This means either the Entity ID doesn't match or the app isn't enabled for the user. Verify that the Entity ID in Google Admin Console exactly matches the Authentik metadata URL (case-sensitive), and that the app is turned ON for the user's organizational unit.

- No Signature exists in the Response element: Enable the Signed response checkbox in the Google Admin Console SAML app under Service Provider Details.

- "Permission denied" on login: Verify the Pixee application in Authentik is linked to the pixee provider. Check Applications > Pixee Enterprise Server > Provider assignment.

Oracle Identity Domains (OAuth)¶

Pixee Enterprise Server supports Oracle Identity Domains as an external identity provider using OAuth/OIDC.

Step 1: Create a Confidential Application in Oracle¶

- Go to OCI Console > Identity & Security > Domains and select your domain

- Navigate to Integrated applications > Add application > Confidential Application

- Enter a name (e.g., Pixee Enterprise Server) and click Next

- Under Client configuration, check "Configure this application as a client now"

- Set Allowed Grant Types to Authorization Code

- Set Redirect URL to: https://<your-domain>/authentik/source/oauth/callback/oracle/

- Leave Token issuance policy set to All

- Click Finish, then Activate the application

- Copy the Client ID and Client Secret

Warning

Do not register Authentik as a Social Identity Provider in Oracle. Oracle should handle password authentication directly.

Step 2: Configure Authentik Federation¶

Follow the Authentik documentation for creating an OAuth Source using the OpenID Connect type.

When configuring the source, use the Client ID and Client Secret from Step 1. Your Oracle OIDC endpoint URLs follow this pattern (replace <your-idcs-instance> with your domain identifier):

- Authorization URL: https://<your-idcs-instance>.identity.oraclecloud.com/oauth2/v1/authorize

- Access token URL: https://<your-idcs-instance>.identity.oraclecloud.com/oauth2/v1/token

- Profile URL: https://<your-idcs-instance>.identity.oraclecloud.com/oauth2/v1/userinfo

You can find these values in your Oracle OIDC discovery document at https://<your-idcs-instance>.identity.oraclecloud.com/.well-known/openid-configuration.

Note

All three endpoint URLs must be set explicitly on the source. Do not rely solely on the OIDC Well-known URL to auto-populate them.
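As a minimal sketch of the URL pattern above, the following Python helper builds the three endpoint values from a domain identifier, and a second helper picks the same values out of an already-downloaded discovery document. The function names and the `idcs-example` identifier are illustrative assumptions, not part of Pixee or Authentik; the discovery keys are the standard OpenID Connect field names.

```python
def oracle_oidc_endpoints(idcs_instance: str) -> dict:
    """Build the endpoint URLs from the pattern documented above."""
    base = f"https://{idcs_instance}.identity.oraclecloud.com"
    return {
        "authorization_url": f"{base}/oauth2/v1/authorize",
        "access_token_url": f"{base}/oauth2/v1/token",
        "profile_url": f"{base}/oauth2/v1/userinfo",
        "discovery_url": f"{base}/.well-known/openid-configuration",
    }


def endpoints_from_discovery(document: dict) -> dict:
    """Extract the same three values from a parsed discovery document.

    The keys read here are standard OpenID Connect discovery fields.
    """
    return {
        "authorization_url": document["authorization_endpoint"],
        "access_token_url": document["token_endpoint"],
        "profile_url": document["userinfo_endpoint"],
    }


if __name__ == "__main__":
    # "idcs-example" is a hypothetical domain identifier.
    for name, url in oracle_oidc_endpoints("idcs-example").items():
        print(f"{name}: {url}")
```

Comparing the output of both helpers is one way to confirm that the values entered on the source match what Oracle publishes.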

After creating the source, add it to the login page.

Troubleshooting¶

- "Permission denied" on login: Verify the Pixee application in Authentik is linked to the pixee provider. Check Applications > Pixee Enterprise Server > Provider assignment.

- Redirect loop on Oracle login: Ensure the Authorization, Token, and Profile URLs are all explicitly set on the Oracle source. If any are blank, the redirect loops back to Authentik.

- Oracle shows Authentik login button: Remove any Social Identity Provider entries for Authentik from Oracle under Security > Identity providers.

LDAP¶

Pixee Enterprise Server supports LDAP directories as an authentication source through Authentik's LDAP federation. Users authenticate with their existing LDAP credentials — Authentik verifies passwords directly against the LDAP server and syncs user accounts automatically.

Prerequisites¶

Gather the following from your LDAP administrator:

- Server URL: ldap://ldap.example.com or ldaps://ldap.example.com (LDAPS recommended for production)

- Bind DN: A service account DN for searching the directory (e.g., cn=svc-pixee,ou=service-accounts,dc=example,dc=com)

- Bind Password: Password for the service account

- Base DN: Where to search for users (e.g., dc=example,dc=com)

- User Object Filter: LDAP filter for user objects (e.g., (objectClass=person))

- Group Object Filter: LDAP filter for group objects (e.g., (objectClass=groupOfUniqueNames))

Network Access

The Pixee Enterprise Server cluster must be able to reach the LDAP server. The default ports are 389 (LDAP) and 636 (LDAPS), but non-standard ports are supported via the Server URI (e.g., ldap://ldap.example.com:3389). Verify network connectivity and firewall rules before configuring.
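The port defaults described above can be sketched in Python as follows. This is an illustrative helper, not part of Pixee or Authentik: `ldap_host_and_port` and `can_connect` are hypothetical names, and the connectivity check only proves that a TCP connection opens, not that the bind or TLS handshake succeeds.

```python
import socket
from urllib.parse import urlsplit

# Default ports named in the text above.
DEFAULT_PORTS = {"ldap": 389, "ldaps": 636}


def ldap_host_and_port(server_uri: str) -> tuple:
    """Return (host, port) for a Server URI, applying the default port."""
    parts = urlsplit(server_uri)
    if parts.scheme not in DEFAULT_PORTS:
        raise ValueError(f"unsupported scheme: {parts.scheme!r}")
    if not parts.hostname:
        raise ValueError("server URI has no host")
    return parts.hostname, parts.port or DEFAULT_PORTS[parts.scheme]


def can_connect(host: str, port: int, timeout: float = 5.0) -> bool:
    """Try to open a TCP connection; True means the port is reachable."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    host, port = ldap_host_and_port("ldaps://ldap.example.com")
    print(host, port, can_connect(host, port))
```

For the result to be meaningful, run the check from a pod inside the cluster, since that is the network path Authentik uses.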

Step 1: Create an LDAP Source in Authentik¶

- In the Authentik admin interface, go to Directory → Federation and Social login → Create → LDAP Source

- Configure the connection settings:

    - Name: A descriptive name (e.g., Corporate LDAP)

    - Slug: ldap (or a descriptive slug like corporate-ldap)

    - Server URI: Your LDAP server URL (e.g., ldaps://ldap.example.com)

    - Bind CN: The service account DN

    - Bind Password: The service account password

    - Base DN: The search base for your directory (e.g., dc=example,dc=com)

- Configure the search settings:

    - User Property Mappings: Select all the default LDAP property mappings (these map LDAP attributes to Authentik user fields)

    - Group Property Mappings: Select the default LDAP group property mappings

    - User object filter: LDAP filter for user objects (e.g., (objectClass=person)); adjust for your directory

    - Group object filter: LDAP filter for group objects (e.g., (objectClass=groupOfUniqueNames)); adjust for your directory

    - Group membership field: member (or uniqueMember depending on your directory schema)

    - Object uniqueness field: uid (adjust for your directory)

- Under password settings, ensure the following are disabled:

    - Update internal password on login: When enabled, Authentik stores a copy of the user's LDAP password internally. Disable this so that passwords are always verified directly against the LDAP server.

    - User password writeback: When enabled, password changes in Authentik are written back to the LDAP server. Disable this unless you want users to change their LDAP password through Authentik.

- Click Create

Step 2: Verify User Sync¶

After creating the LDAP source, trigger a sync and verify:

- Go to Directory → Federation and Social login, click on your LDAP source, and click Run sync

- Go to Directory → Users and verify that LDAP users have been imported

- Go to Directory → Groups and verify that LDAP groups have been imported

If users are not appearing after sync, check the sync logs:

- In the Authentik admin interface, go to Events → Logs

- Look for entries with action configuration_error; these indicate sync failures with details about what went wrong

- Alternatively, check the Authentik worker pod logs directly:

    kubectl logs -n <namespace> deploy/<release-name>-authentik-worker --tail=200 | grep -i "ldap\|configuration_error\|username"

Common errors in the logs include:

- "Username was not set by propertymappings": Ensure a property mapping that sets the username field is selected on the LDAP source under User Property Mappings

- "Could not find page in cache": The sync pagination timed out; try running the sync again, or increase the ldap.task_timeout_hours setting

- "LDAPServerPoolExhaustedError": Authentik cannot connect to the LDAP server; check network connectivity and TLS settings
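When the worker log is long, a small script can tally the known error messages listed above. The sketch below is an assumption-laden helper rather than a Pixee or Authentik tool: `summarize_sync_errors` is a hypothetical name, and it simply does a case-insensitive substring match against log text piped in on standard input.

```python
import sys

# Error substrings and hints taken from the list above.
KNOWN_SYNC_ERRORS = {
    "Username was not set by propertymappings":
        "select a property mapping that sets the username field",
    "Could not find page in cache":
        "re-run the sync or increase ldap.task_timeout_hours",
    "LDAPServerPoolExhaustedError":
        "check network connectivity and TLS settings",
}


def summarize_sync_errors(log_text: str) -> dict:
    """Count how many log lines mention each known error."""
    counts = {}
    for line in log_text.splitlines():
        lowered = line.lower()
        for needle in KNOWN_SYNC_ERRORS:
            if needle.lower() in lowered:
                counts[needle] = counts.get(needle, 0) + 1
    return counts


if __name__ == "__main__":
    # Example: kubectl logs ... | python summarize_sync_errors.py
    for error, count in summarize_sync_errors(sys.stdin.read()).items():
        print(f"{count:>4}  {error}: {KNOWN_SYNC_ERRORS[error]}")
```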

Sync Schedule

By default, Authentik syncs LDAP users and groups periodically (every 120 minutes). You can trigger a manual sync at any time from the LDAP source configuration page. Adjust the sync frequency in the LDAP source's Advanced settings if needed.

Step 3: Add LDAP to the Login Page¶

After creating the source, add it to the login page.

Once added, users will see an LDAP login option. When a user enters their LDAP credentials, Authentik authenticates them directly against the LDAP server.

How LDAP Authentication Works

When users log in via the LDAP source, Authentik performs a bind operation against the LDAP server using the user's credentials. With the recommended password settings above (both disabled), passwords are not stored in Authentik and are always verified directly against the LDAP server. If a user changes their LDAP password, the change takes effect immediately.

Troubleshooting¶

- "Connection refused" or timeout: Verify network connectivity from the cluster to the LDAP server. Check that the correct port (389 for LDAP, 636 for LDAPS) is open.

- "Invalid credentials" on bind: Verify the Bind DN and password.

- "Username was not set by propertymappings": Ensure a property mapping that sets the username field is selected on the LDAP source under User Property Mappings.

- No users synced: Check the Base DN and user object filter. Use ldapsearch to verify the filter returns results from outside the cluster.

- Users synced but cannot log in: Ensure the LDAP source is added to the login page identification stage (see Adding a Source to the Login Page).

- "Permission denied" after LDAP login: Verify the Pixee application in Authentik is linked to the pixee provider. Check Applications > Pixee Enterprise Server > Provider assignment.

- TLS/certificate errors with LDAPS: If using a self-signed or internal CA certificate, you may need to add the CA certificate to the Authentik server's trust store.

Enable "Forgot Password?" Link on the Login Page¶

By default, the Authentik sign-in form does not show a "Forgot password?" link. Administrators can always trigger a password reset for any user from the Authentik admin console (Directory → Users → <user> → Copy recovery link / Send recovery link via email) without any additional configuration; that path works out of the box.

To let end users self-service password recovery directly from the sign-in form, link the bundled pixee-recovery-flow to the identification stage:

- In the Authentik admin interface, go to Flows and Stages → Stages

- Edit default-authentication-identification

- Under Flow settings, set Recovery flow to Password Recovery (pixee-recovery-flow)

- Click Update to save

The "Forgot password?" link will now appear on the sign-in form. Clicking it walks the user through email-based password recovery.

SMTP required for email recovery

Self-service password recovery delivers a one-time-use reset link by email. Configure SMTP in the admin console (Config → SMTP / Email Settings) before enabling this link; otherwise users who click "Forgot password?" will hit a stage that cannot send mail. Administrator-triggered recovery works either way: Copy recovery link never needs SMTP, and only the Send recovery link via email button does.

Federated users

If users sign in through an external identity provider (Entra, Okta, etc.), they reset their password at the upstream provider — Authentik's password recovery only applies to local Authentik accounts (e.g. akadmin, breakglass accounts). For federated-only installs you can skip this step.

Auto-Redirect to Identity Provider¶

By default, when a federated identity provider is added as a source, users see the Authentik login page with both username/password fields and the identity provider button. To skip this page and redirect users directly to the identity provider, configure the identification stage to auto-redirect:

- In the Authentik admin interface, go to Flows and Stages → Stages

- Edit default-authentication-identification

- Under User fields, deselect all fields (remove Username, Email, etc.)

- Under Sources, ensure only the identity provider source is selected

- Ensure the Passwordless flow field is not set (empty/none) — auto-redirect only works when this is unset

- Click Update to save

With no user fields and exactly one source configured, Authentik automatically redirects users to the identity provider without showing the login page.

SAML Binding Type

For SAML sources, ensure the Binding Type on the source is set to Redirect rather than POST. With Redirect binding, Authentik performs a direct HTTP 302 to the identity provider. POST binding requires an intermediate page to submit the SAML request form.

Multiple Identity Providers

If more than one source is configured on the identification stage, auto-redirect is disabled and users will see a source selection page instead.
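The conditions above can be restated as a single predicate. The Python sketch below is only a model of the rule as this guide describes it, not Authentik's implementation; the function name and argument shapes are hypothetical.

```python
def auto_redirect_applies(user_fields, sources, passwordless_flow=None) -> bool:
    """Model of the documented rule for the identification stage.

    Auto-redirect is expected only when no user fields are selected,
    exactly one source is selected, and no passwordless flow is set.
    """
    return not user_fields and len(sources) == 1 and not passwordless_flow


if __name__ == "__main__":
    # Hypothetical stage settings, for illustration only.
    print(auto_redirect_applies([], ["google"]))
    print(auto_redirect_applies(["username"], ["google"]))
    print(auto_redirect_applies([], ["google", "oracle"]))
```

If users still see the login page, checking the stage against these three conditions one at a time usually identifies which setting is blocking the redirect.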

Direct Login for Administrators¶

When auto-redirect is enabled, administrators who need to log in with username/password (e.g., the akadmin account) can no longer use the default login page. Create a separate authentication flow for direct login:

Create an Identification Stage¶

- Go to Flows and Stages → Stages → Create

- Select Identification as the stage type

- Configure:

    - Name: direct-authentication-identification

    - User fields: select Username

    - Sources: leave empty (no identity provider buttons)

    - Password stage: select default-authentication-password (embeds the password field on the same page)

- Click Create

Create the Direct Authentication Flow¶

- Go to Flows and Stages → Flows → Create

- Configure:

    - Name: Direct Authentication Flow

    - Slug: direct-authentication-flow

    - Designation: Authentication

    - Required authentication level: Require no authentication

- Click Create

Bind Stages to the Flow¶

- Click on the direct-authentication-flow flow to open it

- Go to the Stage Bindings tab

- Click Bind existing Stage and add the following bindings:

    | Order | Stage |
    | --- | --- |
    | 10 | direct-authentication-identification (created above) |
    | 30 | default-authentication-mfa-validation (built-in) |
    | 100 | default-authentication-login (built-in) |

    Note

    The default-authentication-password stage is not bound separately because it is already embedded in the identification stage (configured above). Adding it as a separate binding would prompt for the password twice.

- Administrators can now log in directly at: https://<your-domain>/authentik/if/flow/direct-authentication-flow/

Google Authentication¶

Pixee Enterprise Server supports Google authentication using OAuth 2.0.

Configuration¶

You must set up a new OAuth client and retrieve the client ID and client secret. See the Google Cloud Console documentation for more information on creating a new OAuth 2.0 Client ID.

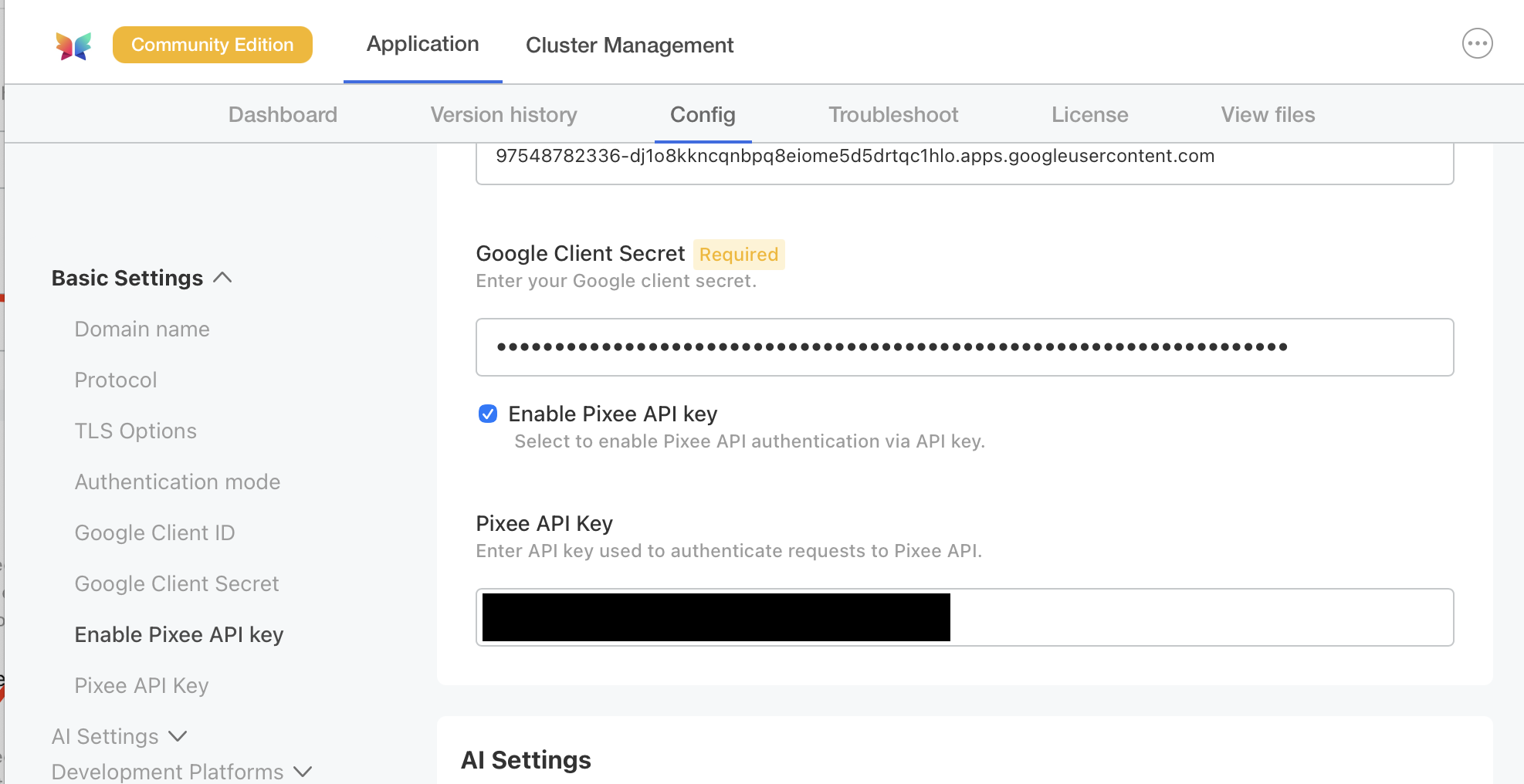

To configure Google authentication in Embedded Cluster deployments:

Navigate to the admin console, select the Config tab, then go to the Basic Settings section.

Under Authentication mode, select Google as the provider and provide a client ID and client secret.

To configure Google authentication in Helm deployments, set the following in your values.yaml:

global:
  pixee:
    access:
      oidc:
        client:
          provider: google
          id: '<your Google oidc client id>'
          secret: '<your Google oidc client secret>'

Microsoft Entra Authentication¶

Deprecated — use Authentik federation instead

Direct Microsoft Entra authentication is deprecated and will be removed in a future release. New deployments should use the embedded Authentik identity provider with Entra ID federation — see Microsoft Entra ID (OAuth) under Federating External Identity Providers. Existing deployments using direct Entra authentication should plan to migrate.

Outbound HTTP proxy not supported in this mode. The platform's OIDC client (Quarkus / Vert.x) does not honor either JVM proxy properties or the no_proxy exclusion list when reaching Microsoft Entra. If your environment requires a corporate egress proxy, OIDC discovery and token exchange will fail. The Authentik-federated path (linked above) is built on Python requests and respects HTTP_PROXY / HTTPS_PROXY / NO_PROXY natively, so it works behind a corporate proxy with the standard proxy settings.

Pixee Enterprise Server supports Microsoft Entra authentication with single tenant applications.

Create an App Registration¶

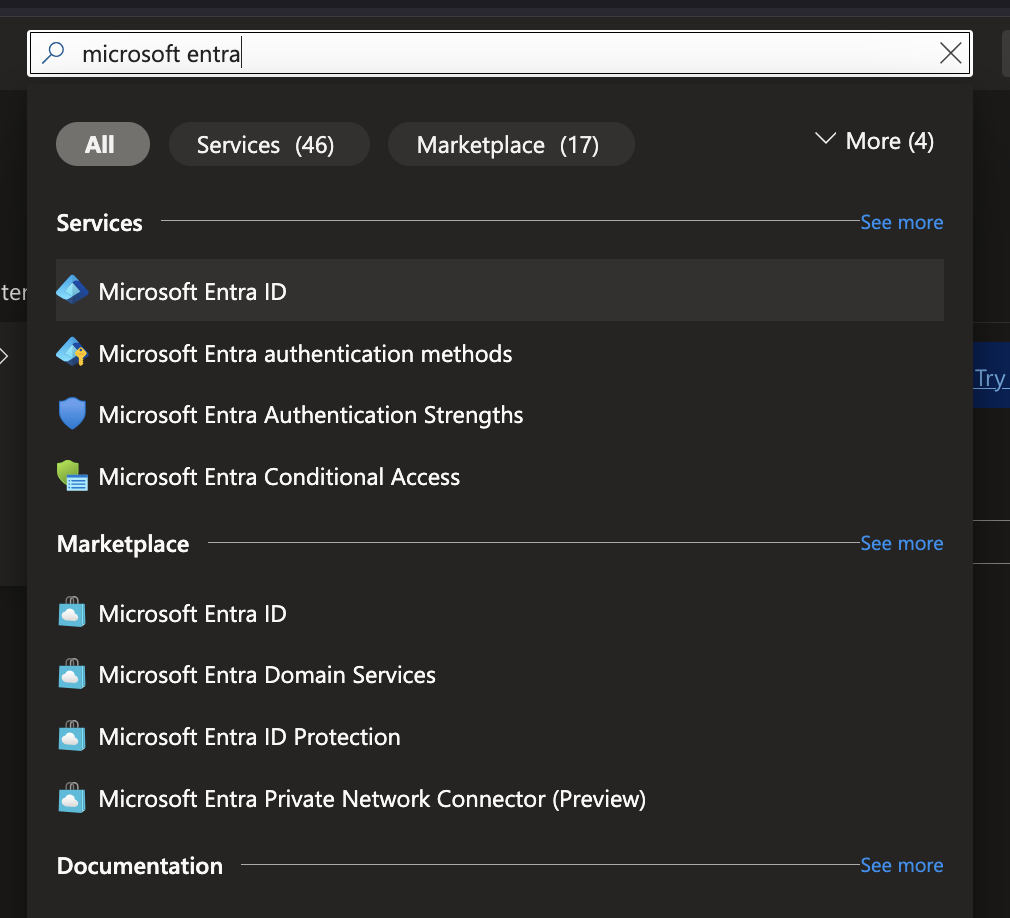

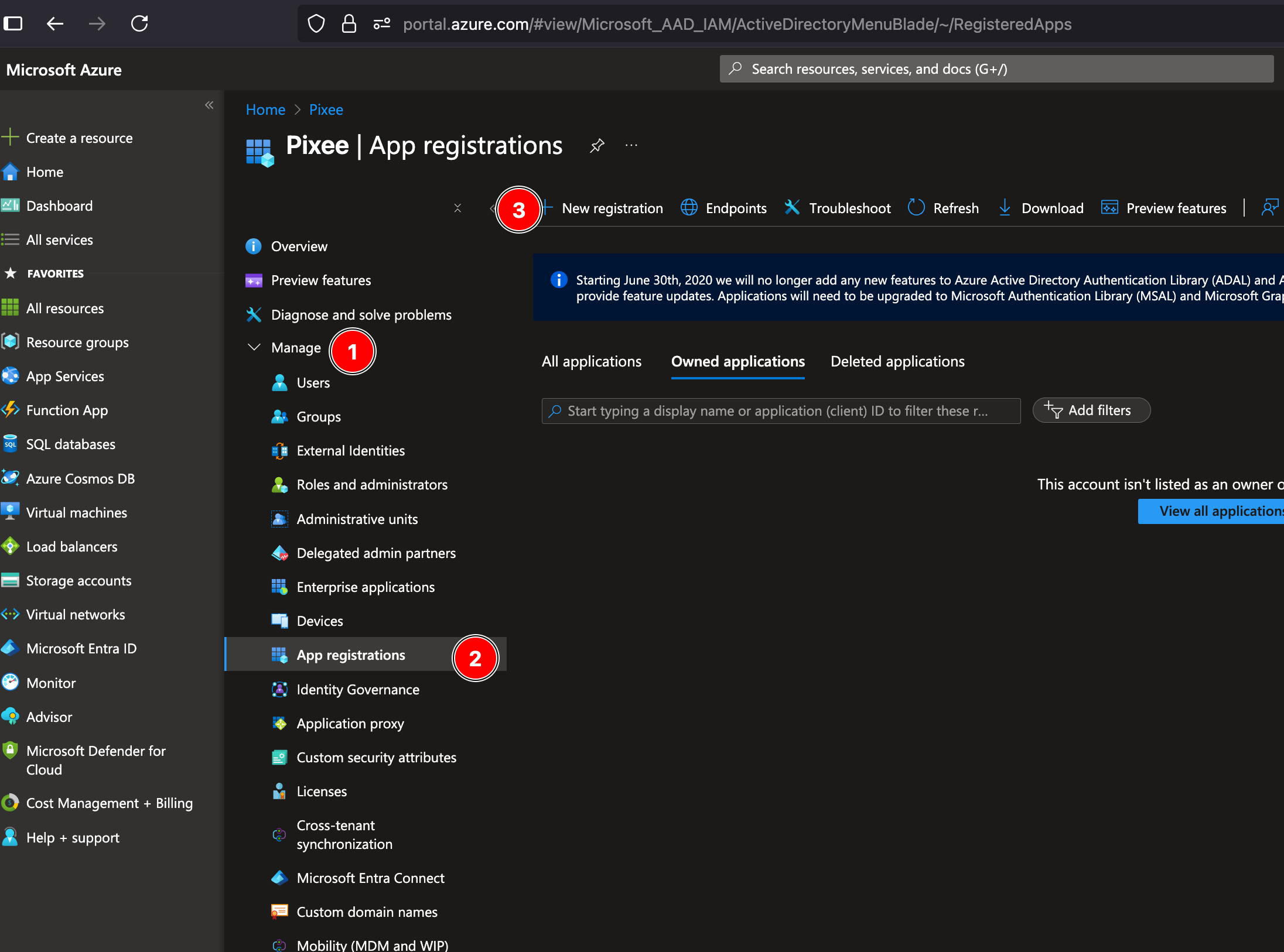

To set up OIDC for Microsoft, go to your Microsoft Azure Portal and search for Microsoft Entra ID. Select Microsoft Entra ID under Services.

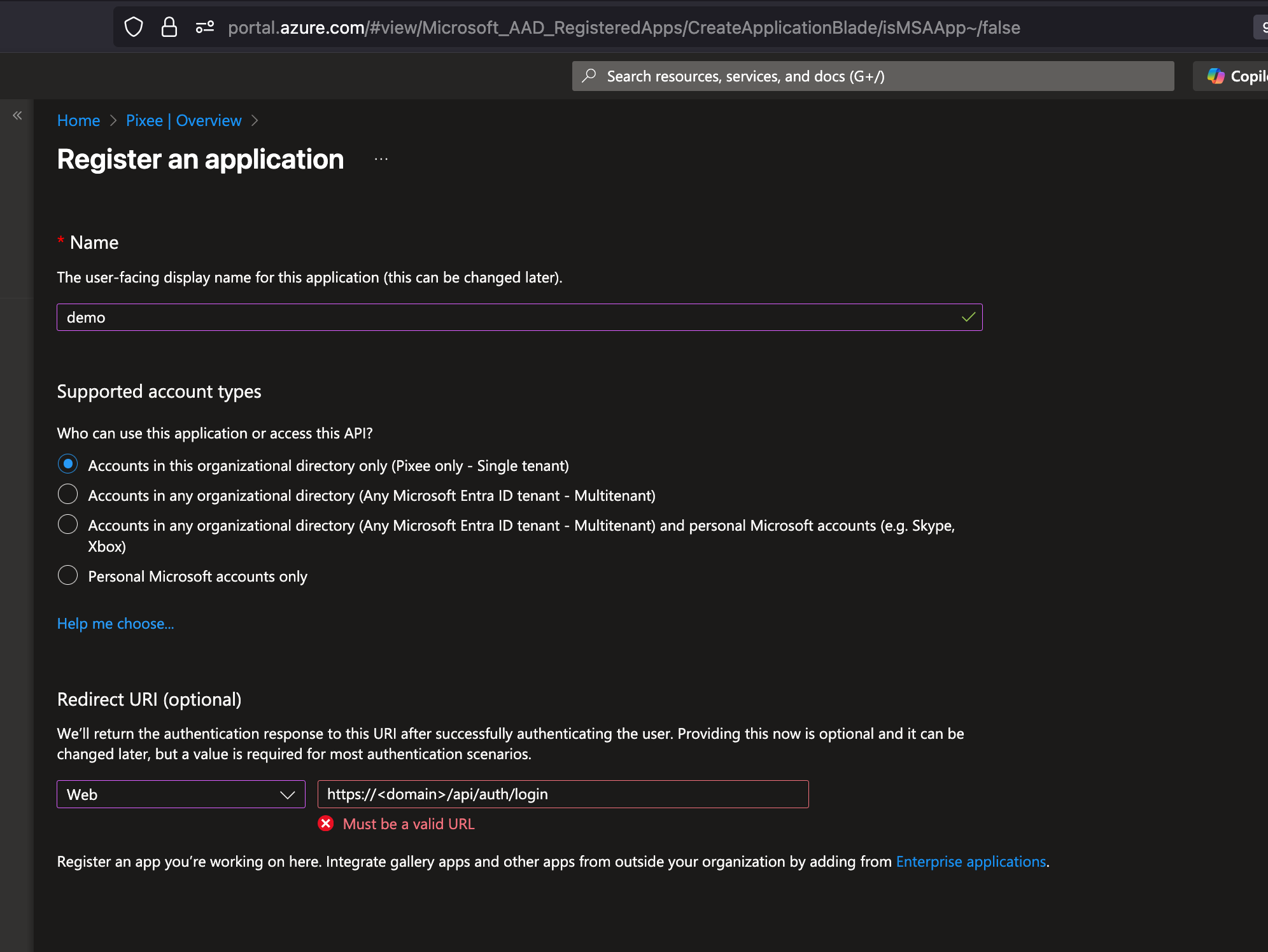

Look for Manage on the left navigation bar, click on App registrations then click on New registration:

Fill in your application name, select the Single tenant option and add a Web Redirect URI as https://<domain>/api/auth/login, then click on Register:

Retrieve App Registration Details¶

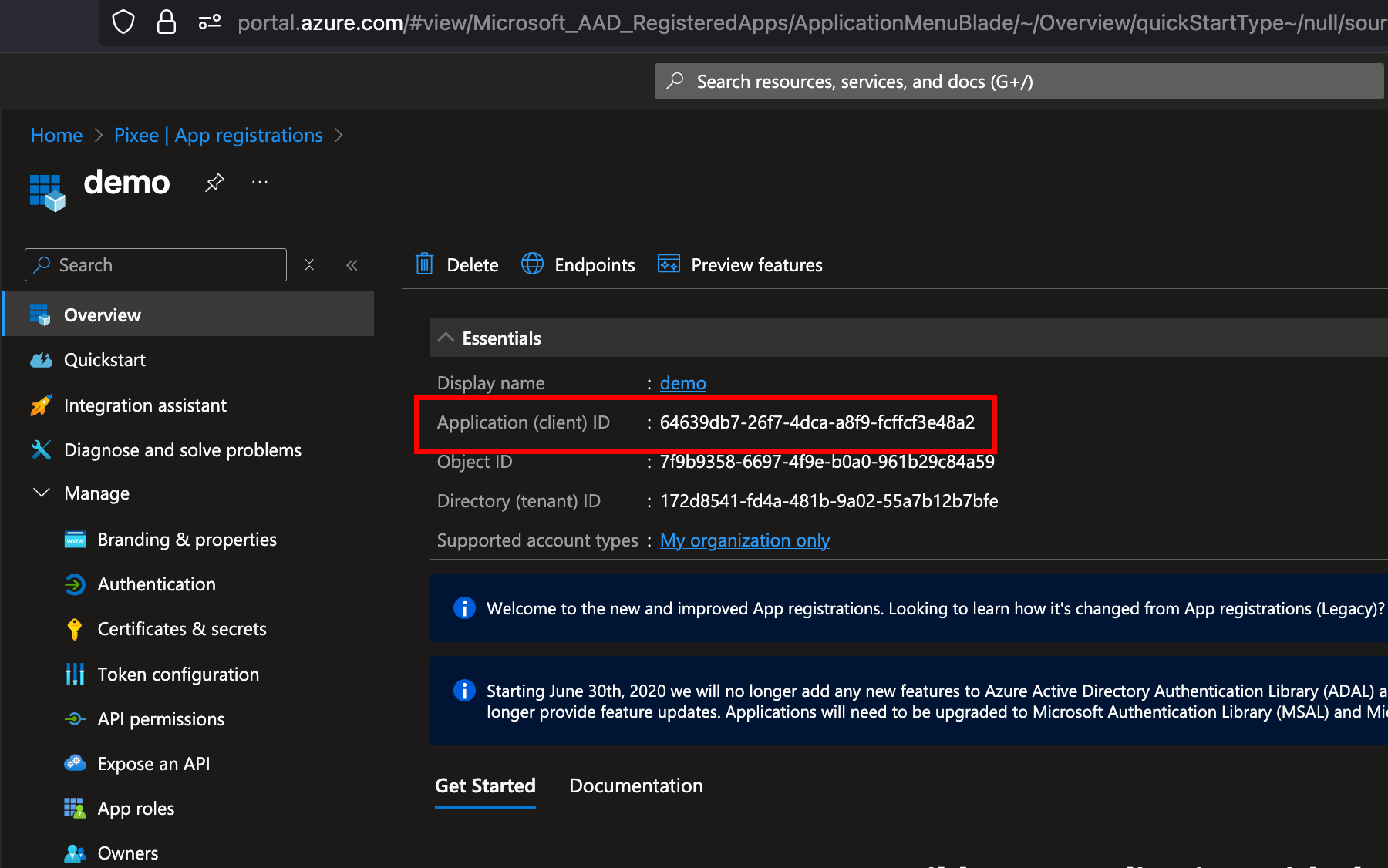

After creating the app registration, you will be redirected to the app's overview page. On this page you will find:

- Application (client) ID: Save this ID, which you will use as the ClientID in your Pixee configuration.

Then navigate to Certificates & secrets in the left navigation bar, and click on New client secret to create a new secret:

Client Secret: After creating the client secret, copy the value immediately as it will not be shown again. This value will be used as the ClientSecret in your Pixee configuration:

Authority URL: can be obtained from the "Endpoints" section of the App Registration:

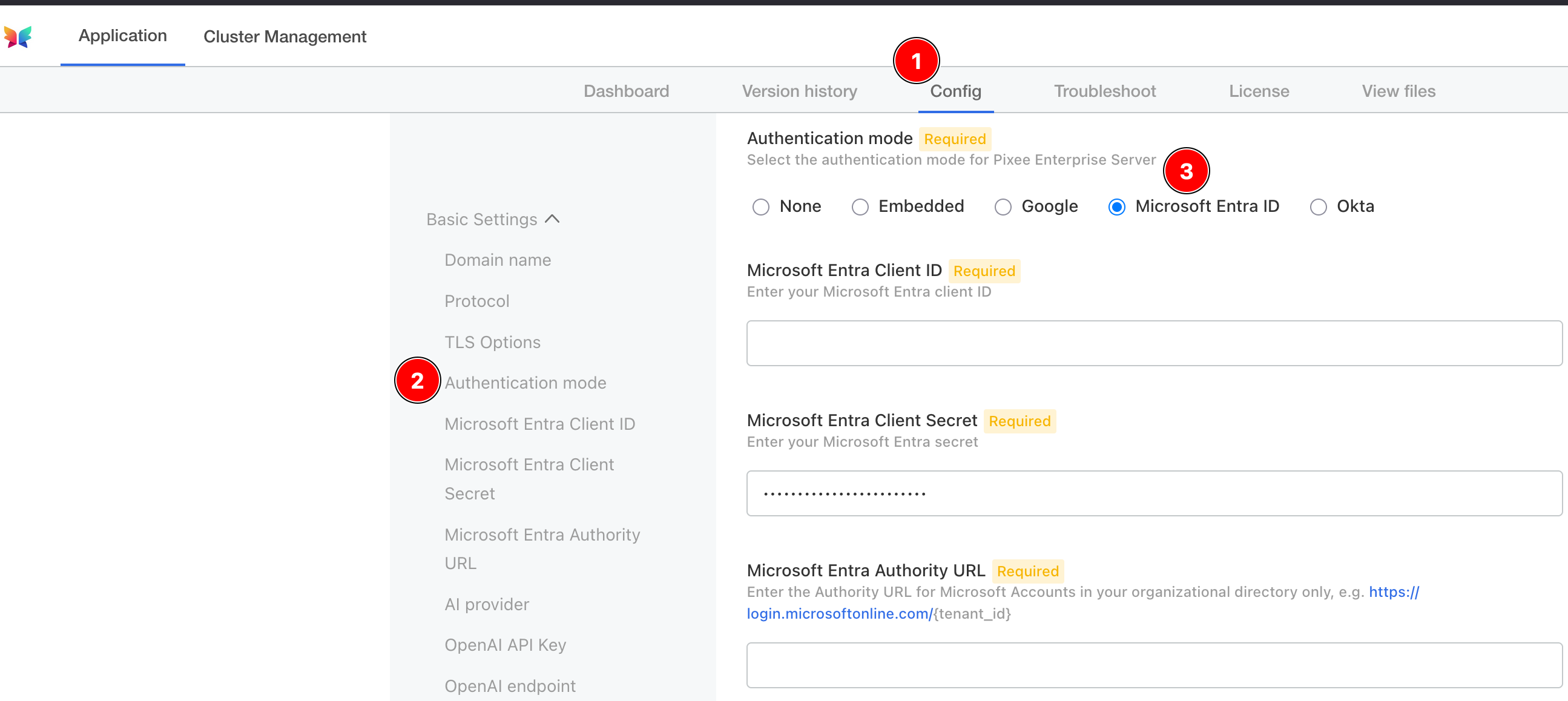

To configure Microsoft Entra authentication in Embedded Cluster deployments:

Navigate to the admin console, select the Config tab, then go to the Basic Settings section.

Under Authentication mode, select Microsoft Entra as the provider and provide a client ID, client secret, and authority URL.

To configure Microsoft Entra authentication in Helm deployments, set the following in your values.yaml:

global:
  pixee:
    access:
      oidc:
        client:
          provider: microsoft
          id: '<your Microsoft oidc client id>'
          secret: '<your Microsoft oidc client secret>'
          authServerUrl: '<your Microsoft oidc auth server url, such as https://login.microsoftonline.com/{tenant_id}>'

Okta Authentication¶

Pixee Enterprise Server supports Okta OIDC authentication.

Configuration¶

You must create a new OIDC App Integration from the Okta Admin Console and retrieve the client ID, client secret, and Okta URL:

- Log in to the Okta Admin Console as an administrator.

- Navigate to Applications > Applications > Add App Integration.

- Select OIDC - OpenID Connect, set Application Type to Web Application, and then click Next.

- Configure the following required settings:

- App Integration Name: pixee

- Sign-in redirect URIs: https://<domain>/api/auth/login

- Under Assignments, select how you'd like to control access to Pixee. Allow everyone in your organization to access or select a group to limit access.

- Click Save.

- Under Client Credentials, take note of the Client ID. This value will be required in the Pixee Admin Console.

- Under CLIENT SECRETS, click Copy to clipboard next to the secret and take note of the value. It will also be required in the Pixee Admin Console.

The Okta URL is of the form https://{tenant-name}.okta.com. You can verify this is correct by viewing the well-known OpenID Connect configuration at https://{tenant-name}.okta.com/.well-known/openid-configuration.

To configure Okta authentication in Embedded Cluster deployments:

Navigate to the admin console, select the Config tab, then go to the Basic Settings section.

Under Authentication mode, select Okta as the provider and provide a client ID, client secret, and Okta URL.

To configure Okta authentication in Helm deployments, set the following in your values.yaml:

global:
  pixee:
    access:
      oidc:
        client:
          provider: okta
          id: '<your Okta oidc client id>'
          secret: '<your Okta oidc client secret>'
          authServerUrl: '<your Okta oidc auth server url, such as https://{tenant-name}.okta.com>'

Development Platform Integrations¶

This section covers integrating Pixee Enterprise Server with various development platforms and source code management systems.

Azure DevOps Integration¶

Azure DevOps integration allows Pixee Enterprise Server to work with your Azure DevOps repositories and requires a personal access token with specific permissions.

Requirements¶

Azure DevOps integration requires:

- Your Azure DevOps organization name

- A personal access token with a custom scope that includes full Code access (not "Full access" which grants broader permissions than necessary)

Info

The webhook user and password are optional properties for Azure DevOps webhook authentication. If configured, these credentials will be used to authenticate incoming webhook requests from Azure DevOps.

Configuration¶

For embedded cluster deployments, navigate to the admin console, Config tab and then to the Development Platforms section.

Select the Azure DevOps checkbox to enable Azure DevOps integration.

Enter the following information in the configuration fields:

- Organization: Your Azure DevOps organization name

- Token: Your personal access token with full Code access

- Webhook credentials (optional): Username and password for webhook authentication if desired

For Helm deployments, add the following to your values.yaml:

platform:
  scm:
    azure:
      organization: "<your azure devops organization name>"
      token: "<your personal access token>"
      # Optional: For webhook authentication
      # webhook:
      #   user: "<your webhook username>"
      #   password: "<your webhook password>"
      # Use existing secret instead of creating one
      existingSecret: ""
      secretKeys:
        # -- The secret key containing the token
        tokenKey: "token"
        # -- The secret key containing the webhook password
        webhookPasswordKey: "webhookPassword"
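If you prefer to reference an existing secret, the following Python sketch generates a Kubernetes Secret manifest whose keys match the secretKeys defaults shown above (token and webhookPassword). It assumes the chart reads those keys from the secret named in existingSecret; the secret name and namespace are hypothetical, and stringData is used so that Kubernetes performs the Base64 encoding.

```python
import json


def azure_devops_secret(name, namespace, token, webhook_password=None):
    """Build a Secret manifest keyed to match the secretKeys defaults."""
    string_data = {"token": token}
    if webhook_password is not None:
        string_data["webhookPassword"] = webhook_password
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        # stringData accepts plain text; the API server encodes it.
        "stringData": string_data,
    }


if __name__ == "__main__":
    # "pixee-azure-devops" and "pixee" are hypothetical names.
    manifest = azure_devops_secret("pixee-azure-devops", "pixee", "<token>")
    print(json.dumps(manifest, indent=2))
```

The printed JSON can be piped to kubectl apply -f -, after which existingSecret would be set to the same secret name.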

BitBucket Cloud Integration¶

BitBucket Cloud integration allows Pixee Enterprise Server to work with your BitBucket repositories and requires account credentials with specific permissions.

For security, it is recommended to create and use an API token for BitBucket Cloud integration rather than using personal credentials. See the BitBucket API Token documentation for information on creating an API token.

Note

BitBucket API tokens require your account's email address for API authentication, while Git operations use your username. Make sure to configure both values.

Requirements¶

BitBucket Cloud integration requires:

- A BitBucket Cloud username (used for Git operations)

- Your BitBucket account email address (used for API authentication)

- An API token with the following scopes:

    - read:user:bitbucket

    - read:workspace:bitbucket

    - read:repository:bitbucket

    - read:pullrequest:bitbucket

    - write:repository:bitbucket

    - write:pullrequest:bitbucket

Configuration¶

For embedded cluster deployments, navigate to the admin console, Config tab and then to the Development Platforms section.

Select the BitBucket checkbox to enable BitBucket Cloud integration.

Enter the following information in the configuration fields:

- Username: Your BitBucket Cloud username (used for Git operations)

- Email Address: Your BitBucket account email address (used for API authentication)

- API Token: Your BitBucket API token

For Helm deployments, add the following to your values.yaml:

platform:
  scm:
    bitbucket:
      username: "<your bitbucket cloud username>"
      emailAddress: "<your bitbucket account email address>"
      apiToken: "<your bitbucket api token>"
      # Use existing secret instead of creating one
      existingSecret: ""
      secretKeys:
        # -- The secret key containing the API token
        apiTokenKey: "apiToken"

GitHub Integration¶

GitHub integration allows Pixee Enterprise Server to work with GitHub.com or self-hosted GitHub Enterprise Servers and requires a custom GitHub app to be created.

If you are self-hosting a GitHub Enterprise Server or have otherwise configured it on a domain other than github.com, see the Configuration section below for instructions on setting your custom GitHub domain.

GitHub integration comes in the form of a custom GitHub app, which will be needed to configure GitHub integration in Pixee Enterprise Server. A custom GitHub app configures webhook events, event destination, and permissions for enhanced GitHub integration. In creating this application, we have followed the best practices provided by GitHub.

Info

Network communication between your GitHub (.com or Enterprise Server) and Pixee Enterprise Server must exist. This can vary based on the deployment configuration of GitHub Enterprise Server and Pixee Enterprise Server.

GitHub App Setup¶

Unless otherwise instructed, leave the existing default values provided by GitHub.

- Go to https://github.com/settings/apps, replacing github.com with your own private GitHub host as needed.

- Click the New GitHub App button.

- Set the GitHub App name to something unique (e.g., "AcmePixeebotApp") and save this value for later.

- Set Homepage URL to anything (e.g., "https://pixee.ai"); this can be updated later.

- Set the Callback URL to the URL of your host/cluster in the following format: http://acme.getpixee.com/api/auth/login

- Check Request user authorization (OAuth) during installation.

- Check Active under Webhook.

- Set Webhook URL to the URL of your host/cluster in the following format: http://acme.getpixee.com/github-event

- Set Webhook Secret to a secret value; a randomly generated string will work (save this for later).

- Set these Repository permissions:

    | Repository permissions | Access |
    | --- | --- |
    | Checks | Read and write |
    | Code scanning alerts | Read and write |
    | Commit statuses | Read and write |
    | Contents | Read and write |
    | Dependabot alerts | Read and write |
    | Issues | Read and write |
    | Metadata | Read-only |
    | Pull Requests | Read and write |
    | Workflows | Read and write |

- Set these Organization permissions:

    | Organization permission | Access |
    | --- | --- |
    | Members | Read-only |

- Set these Account permissions:

    | Account permissions | Access |
    | --- | --- |
    | Email addresses | Read-only |

- Check Subscribe to events for the following:

    - Code scanning alert

    - Check Run

    - Create

    - Dependabot alert

    - Issue Comment

    - Issues

    - Pull request

    - Pull request review

    - Pull request review comment

    - Pull request review thread

    - Push

    - Repository

- For Where can this GitHub App be installed? select Only on this account; this can be updated later.

- Click the Create GitHub App button.

- Once the GitHub App is created, you should see the GitHub App configuration page.

- Copy App ID and save for later.

- Scroll down and click Generate a private key, then download the private key file and save it for later.
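For the Webhook Secret step above, a random value can be generated with Python's standard library. The sketch below also shows how GitHub's X-Hub-Signature-256 header is derived from that secret (an HMAC-SHA256 of the raw request body, prefixed with sha256=), which can help when debugging deliveries. The function names are illustrative, and this is not a description of how Pixee Enterprise Server validates events internally.

```python
import hashlib
import hmac
import secrets


def new_webhook_secret(num_bytes: int = 32) -> str:
    """Return a random hex string suitable for the Webhook Secret field."""
    return secrets.token_hex(num_bytes)


def github_signature(secret: str, body: bytes) -> str:
    """Compute the value GitHub sends in X-Hub-Signature-256."""
    digest = hmac.new(secret.encode("utf-8"), body, hashlib.sha256).hexdigest()
    return f"sha256={digest}"


def signature_matches(secret: str, body: bytes, header_value: str) -> bool:
    """Constant-time comparison of the expected and received signatures."""
    return hmac.compare_digest(github_signature(secret, body), header_value)


if __name__ == "__main__":
    secret = new_webhook_secret()
    print("Webhook secret:", secret)
    print("Example signature:", github_signature(secret, b'{"zen": "test"}'))
```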

Configuration¶

Select your installation method for instructions.

For embedded cluster deployments, navigate to the admin console, Config tab and then to the Development Platforms section.

Select the GitHub checkbox to enable GitHub integration.

If you are self-hosting a GitHub enterprise server or otherwise have configured GitHub enterprise server on a domain other than github.com, be sure to select custom domain for the GitHub domain setting in the Pixee Enterprise Server admin console and enter your custom GitHub domain.

After creating your GitHub App, enter the following data into the appropriate fields on the Pixee Enterprise Server admin console configuration screen:

- app name

- app id

- app private key (downloaded from browser)

For Helm deployments, add the following to your values.yaml:

platform:
  github:
    appName: "<your custom GitHub app name>"
    appId: "<your custom GitHub app id>"
    appWebhookSecret: "<your custom GitHub app webhook secret>"
    appPrivateKey: |
      -----BEGIN RSA PRIVATE KEY-----
      ...
      -----END RSA PRIVATE KEY-----
    # -- Use an existing secret instead of creating one
    existingSecret: ""
    secretKeys:
      # -- The secret key containing the appWebhookSecret
      appWebhookSecretKey: appWebhookSecret
      # -- The secret key containing the appPrivateKey
      appPrivateKeySecretKey: appPrivateKey
    # For GitHub Enterprise hosted at domains other than github.com, uncomment and set your GitHub Enterprise url:
    # url: "https://github.your-company.com"

Tip

Check that the indentation is correct for each line of the GitHub app private key.

Verification¶

If you enabled GitHub integration and created a custom GitHub app, you can verify your GitHub App connectivity by checking your GitHub App's event log. This log can be accessed through your GitHub App's settings under the "Advanced" section. See GitHub.com for more information.

GitLab Integration¶

Pixee Enterprise Server is able to integrate with https://gitlab.com as well as self-hosted GitLab servers. If you have a self-hosted GitLab server, see the Configuration section below for instructions on setting your custom GitLab base URI.

Requirements¶

GitLab integration requires:

- A GitLab personal access token with the following scopes:

    - api

    - read_user

    - read_repository

    - read_api

    - write_repository

    - ai_features

    - read_registry

    - read_virtual_registry

- (Optional) Self-hosted GitLab server base URI if not using GitLab.com

- (Optional) Webhook secret for GitLab webhook integration

Tip

It is recommended to use a GitLab service account to generate the personal access token rather than a personal user account. Service accounts are not tied to individual users, which avoids disruption if a team member leaves or their account is modified. The service account should be granted access to the groups or projects that Pixee will manage.

Configuration¶

For embedded cluster deployments, navigate to the admin console, Config tab and then to the Development Platforms section.

Select the GitLab checkbox to enable GitLab integration.

Enter the following information in the configuration fields:

- Token: Your GitLab personal access token with the required scopes listed above

- Base URI (optional): Your self-hosted GitLab server URL

- Webhook secret (optional): Secret for webhook authentication

For Helm deployments, add the following to your values.yaml:

platform:
  scm:
    gitlab:
      # For self-hosted GitLab, add:
      # baseUri: "https://gitlab.your-company.com"
      token: "your-personal-access-token" # requires scopes: api, read_user, read_repository, read_api, write_repository, ai_features, read_registry, read_virtual_registry
      # If you are using GitLab webhooks, provide the webhook secret:
      # webhookSecret: "your-gitlab-webhook-secret"
      # Use existing secret instead of creating one
      existingSecret: ""
      secretKeys:
        # -- The secret key containing the token
        tokenKey: "token"
        # -- The secret key containing the webhookSecret
        webhookSecretKey: "webhookSecret"

Webhook Configuration¶

If you want to use webhooks to notify Pixee of build events, you'll need to configure webhooks in your GitLab project.

The webhook URI should be: https://<example-pixee-server.com>/api/v1/integrations/gitlab-default/webhooks

For detailed instructions on configuring GitLab webhooks, see the GitLab Webhook Documentation.

The webhook secret configured in Pixee Enterprise Server should match the secret token configured in your GitLab webhook settings.
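GitLab sends the configured secret token verbatim in the X-Gitlab-Token request header, so a receiver only needs to compare it with its own copy. The Python sketch below illustrates that comparison in general terms; it is not Pixee Enterprise Server's implementation, and the function name is hypothetical.

```python
import hmac


def gitlab_token_matches(configured_secret: str, header_value) -> bool:
    """Compare the X-Gitlab-Token header with the configured secret.

    hmac.compare_digest avoids leaking information through timing.
    """
    if header_value is None:
        return False
    return hmac.compare_digest(
        configured_secret.encode("utf-8"), header_value.encode("utf-8")
    )


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    print(gitlab_token_matches("s3cret", "s3cret"))
    print(gitlab_token_matches("s3cret", "wrong"))
```

A mismatch between the two values is a common reason for webhook deliveries being rejected, so it is worth re-entering the secret on both sides if events do not arrive.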

Security Integrations¶

This section covers integrating Pixee Enterprise Server with various security scanning and analysis tools.

HCL AppScan Integration¶

HCL AppScan integration allows Pixee Enterprise Server to communicate with your existing AppScan security scans to analyze, react to, fix and update issues.

Requirements¶

HCL AppScan integration requires:

- An AppScan Base URI (defaults to https://cloud.appscan.com)

- An AppScan Key ID and Key Secret

- The key ID must be attached to a role with permissions to post comments on issues

- Webhook authentication credentials:

- Basic Auth (recommended): Username and password for HTTP Basic authentication on incoming webhooks.

- Webhook Secret (deprecated): The secret is embedded in the webhook URL path. This method is deprecated in favor of Basic Auth.

Configuration¶

For embedded cluster deployments, navigate to the admin console, Config tab and then to the Security Tool section.

Select the AppScan checkbox to enable HCL AppScan integration.

Enter the following information in the configuration fields:

- Base URI: Your AppScan base URI (defaults to https://cloud.appscan.com)

- Key ID: Your AppScan key ID with comment permissions

- Key Secret: Your AppScan key secret

- Webhook Authentication Mode: Choose your authentication method:

- Basic Auth (Username/Password) (recommended): Enter username and password for HTTP Basic authentication on incoming webhooks.

- Webhook Secret (deprecated): Enter a shared secret that will be embedded in the webhook URL. This method is deprecated in favor of Basic Auth.

For Helm deployments, add the following to your values.yaml:

platform:
  pixeebot:
    appscan:
      apiKeyId: "your-appscan-key-id"
      apiKeySecret: "your-appscan-key-secret"
      webhook:
        user: "your-webhook-username"
        password: "your-webhook-password"
      # Use existing secret instead of creating one
      existingSecret: ""
      secretKeys:
        apiKeySecretKey: "apiKeySecret"
        webhookUserKey: "webhookUser"
        webhookPasswordKey: "webhookPassword"

Webhook Configuration¶

To receive notifications from AppScan, you'll need to configure two webhooks in your AppScan presence server using Basic Auth.

Creating the Authorization Header¶

First, generate the Base64-encoded authorization header using the webhook username and password you configured in Pixee Enterprise Server:

echo -n "username:password" | base64

This will output a Base64 string like dXNlcm5hbWU6cGFzc3dvcmQ=. Prepend the word Basic followed by a single space to create the full authorization header value, for example: Basic dXNlcm5hbWU6cGFzc3dvcmQ=
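The same header value can be produced in Python, which avoids shell quoting problems when the password contains special characters. This is a minimal sketch; `basic_auth_header` is an illustrative name.

```python
import base64


def basic_auth_header(username: str, password: str) -> str:
    """Return the full HTTP Basic authorization header value."""
    raw = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(raw).decode("ascii")


if __name__ == "__main__":
    print(basic_auth_header("username", "password"))
    # prints: Basic dXNlcm5hbWU6cGFzc3dvcmQ=
```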

Webhook 1: Scan Execution Completed¶

This webhook notifies Pixee Enterprise Server when an AppScan scan completes. Use the AppScan Webhook API to create it with the following request body:

{
  "AuthorizationHeader": "Basic <your-base64-encoded-credentials>",
  "PresenceId": "<your-presence-id>",
  "Uri": "https://<your-pixee-server>/api/v1/integrations/appscan-default/webhooks/_/ScanExecutionCompleted/{SubjectId}",
  "Global": true,
  "AssetGroupId": "<your-asset-group-id>",
  "Event": "ScanExecutionCompleted"
}

Webhook 2: New Patch Request¶

This webhook notifies Pixee Enterprise Server when a new patch is requested in AppScan. Use the AppScan Webhook API to create it with the following request body:

{
  "AuthorizationHeader": "Basic <your-base64-encoded-credentials>",
  "PresenceId": "<your-presence-id>",
  "Uri": "https://<your-pixee-server>/api/v1/integrations/appscan-default/webhooks/CreatePatch",
  "Global": true,
  "AssetGroupId": "<your-asset-group-id>",
  "Event": "NewPatchRequest",
  "RequestMethod": "POST",
  "RequestBody": "{\"patch_id\": \"{SubjectId}\"}",
  "ContentType": "application/json"
}

Placeholder Reference¶

Replace the following placeholders in both webhooks:

- <your-base64-encoded-credentials>: The Base64-encoded username:password string from the command above

- <your-presence-id>: Your AppScan presence server ID

- <your-pixee-server>: Your Pixee Enterprise Server hostname

- <your-asset-group-id>: Your AppScan asset group ID

For detailed instructions on configuring AppScan webhooks, refer to the AppScan Webhook API Documentation.
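To avoid copy-and-paste mistakes when filling in the placeholders, the two request bodies can be generated from a single set of inputs. The sketch below only builds the JSON documents shown in this section; it does not call the AppScan API, and the function name and example values are hypothetical.

```python
import json


def appscan_webhook_payloads(pixee_host, presence_id, asset_group_id, auth_header):
    """Build the request bodies for the two AppScan webhooks."""
    base = f"https://{pixee_host}/api/v1/integrations/appscan-default/webhooks"
    common = {
        "AuthorizationHeader": auth_header,
        "PresenceId": presence_id,
        "Global": True,
        "AssetGroupId": asset_group_id,
    }
    scan_completed = {
        **common,
        # {SubjectId} is left as a literal token in the URI.
        "Uri": base + "/_/ScanExecutionCompleted/{SubjectId}",
        "Event": "ScanExecutionCompleted",
    }
    new_patch = {
        **common,
        "Uri": base + "/CreatePatch",
        "Event": "NewPatchRequest",
        "RequestMethod": "POST",
        # RequestBody is itself a JSON document carried as a string.
        "RequestBody": json.dumps({"patch_id": "{SubjectId}"}),
        "ContentType": "application/json",
    }
    return scan_completed, new_patch


# Example values are placeholders, not real identifiers.
scan, patch = appscan_webhook_payloads(
    "pixee.example.com", "presence-id", "asset-group-id",
    "Basic dXNlcm5hbWU6cGFzc3dvcmQ=",
)
print(json.dumps(scan, indent=2))
print(json.dumps(patch, indent=2))
```

Serializing with `json.dumps` takes care of escaping the nested RequestBody string.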

Arnica Integration¶

Arnica integration allows Pixee Enterprise Server to communicate with your existing Arnica security platform to analyze, react to, fix and update issues.

Requirements¶

Arnica integration requires:

- An Arnica API key

Configuration¶

For embedded cluster deployments, navigate to the admin console, Config tab and then to the Security Tool section.

Select the Arnica checkbox to enable Arnica integration.

Enter the following information in the configuration fields:

- API Key: Your Arnica API key

For Helm deployments, add the following to your values.yaml:

platform:
  arnica:
    apiKey: "your-arnica-api-key"
    # Use existing secret instead of creating one
    existingSecret: ""
    secretKeys:
      # -- The secret key containing the apiKey
      apiKeyKey: "apiKey"

Black Duck Integration¶

Black Duck integration allows Pixee Enterprise Server to communicate with your existing Black Duck security scans to analyze, react to, fix and update issues.

Requirements¶

Black Duck integration requires:

- A Black Duck access token

Configuration¶

For embedded cluster deployments, navigate to the admin console, Config tab and then to the Security Tool section.

Select the Black Duck checkbox to enable Black Duck integration.

Enter the following information in the configuration fields:

- Access Token: Your Black Duck access token

For Helm deployments, add the following to your values.yaml:

platform:
  blackduck:
    accessToken: "your-blackduck-access-token"
    # Use existing secret instead of creating one
    existingSecret: ""
    secretKeys:
      # -- The secret key containing the accessToken
      accessTokenKey: "accessToken"

SonarQube Integration¶

SonarQube integration allows Pixee Enterprise Server to communicate with your existing SonarQube security scans to analyze, react to, fix and update issues.

Pixee Enterprise Server can integrate with both SonarQube Cloud and SonarQube Server.

Requirements¶

SonarQube integration requires:

- A SonarQube personal access token with access to retrieve issues and hotspots for the projects that will be integrated with Pixee Enterprise Server

- A webhook secret for receiving scan notifications. When creating the webhook, set the URL to https://<domain>/api/v1/integrations/sonar-default/webhooks.
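For background on what the webhook secret is used for: SonarQube signs each webhook payload with HMAC-SHA256 using the configured secret and sends the hex digest in the X-Sonar-Webhook-HMAC-SHA256 header. This guide does not describe how Pixee Enterprise Server performs the check internally, so the following is only a minimal sketch of how a receiver could verify such a signature; the function name is hypothetical.

```python
import hashlib
import hmac


def sonar_signature_is_valid(secret: str, body: bytes, header_value: str) -> bool:
    """Compare the received signature with one computed from the raw body."""
    expected = hmac.new(secret.encode("utf-8"), body, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, header_value)


# Illustrative round trip with a made-up secret and payload.
payload = b'{"status": "SUCCESS"}'
signature = hmac.new(b"example-secret", payload, hashlib.sha256).hexdigest()
print(sonar_signature_is_valid("example-secret", payload, signature))
```

The signature is computed over the raw request bytes, so the secret entered in SonarQube and in Pixee Enterprise Server must match exactly.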

Configuration¶

For embedded cluster deployments, navigate to the admin console, Config tab and then to the Security Tool section.

Select the SonarQube checkbox to enable SonarQube integration. If you host your own SonarQube Server instance, select SonarQube Server, then enter your SonarQube Server base URI and, if applicable, the name of your SonarQube GitHub app.

For all SonarQube integration types (Server or Cloud) enter the following information in the configuration fields:

- Personal Access Token: Your SonarQube personal access token

- Webhook Secret: Secret for webhook authentication

For Helm deployments, add the following to your values.yaml:

platform:
  sonar:
    # For SonarQube Server integration, provide your SonarQube server baseUri:
    # baseUri: "https://sonarqube.your-company.com"
    # If you have a custom Sonar GitHub app, provide the GitHub app name:
    # gitHubAppName: "your-sonarqube-github-app-name"
    token: "your-sonarqube-personal-access-token"
    webhookSecret: "your-sonarqube-webhook-secret"
    # -- Use an existing secret instead of creating one
    existingSecret: ""
    secretKeys:
      # -- The secret key containing the token
      tokenKey: "token"
      # -- The secret key containing the webhookSecret
      webhookSecretKey: "webhookSecret"
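If you prefer to manage credentials yourself, existingSecret can point at a Kubernetes Secret whose keys match the names listed under secretKeys. The sketch below renders such a Secret as a JSON manifest. The secret name pixee-sonar-credentials is hypothetical, and it is an assumption that the Secret must live in the namespace where Pixee Enterprise Server is installed.

```python
import base64
import json


def opaque_secret(name: str, values: dict) -> dict:
    """Build a Kubernetes Secret manifest with Base64-encoded data values."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name},
        "type": "Opaque",
        "data": {
            key: base64.b64encode(value.encode("utf-8")).decode("ascii")
            for key, value in values.items()
        },
    }


# Key names follow the secretKeys defaults shown above.
manifest = opaque_secret(
    "pixee-sonar-credentials",  # hypothetical name
    {
        "token": "your-sonarqube-personal-access-token",
        "webhookSecret": "your-sonarqube-webhook-secret",
    },
)
print(json.dumps(manifest, indent=2))
```

The printed manifest can be saved to a file and applied with kubectl apply -f, after which existingSecret would be set to the same name.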

Advanced Filtering Options¶

SonarQube integration supports advanced filtering to control which findings are retrieved and processed.

Software Quality Filtering¶

Control which types of findings to retrieve:

In the admin console Security Tool section, use the following checkboxes:

- Exclude Maintainability Findings: Select to exclude maintainability findings (code smells).

- Exclude Reliability Findings: Select to exclude reliability findings (bugs).

With both selected, only security-related issues are retrieved.

For Helm deployments, set the equivalent values in your values.yaml:

platform:
  sonar:
    # Exclude maintainability findings (code smells)
    excludeMaintainabilityFindings: true
    # Exclude reliability findings (bugs)
    excludeReliabilityFindings: true
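The effect of the two flags can be summarized as a small truth table. The following sketch models it under the assumption that security findings are always retrieved; the function name and the quality labels are illustrative and are not configuration values.

```python
def retained_software_qualities(
    exclude_maintainability: bool, exclude_reliability: bool
) -> list[str]:
    """Return the categories of findings that remain after filtering."""
    qualities = ["SECURITY"]  # assumed to be retrieved in every case
    if not exclude_reliability:
        qualities.append("RELIABILITY")
    if not exclude_maintainability:
        qualities.append("MAINTAINABILITY")
    return qualities


print(retained_software_qualities(True, True))
# prints: ['SECURITY']
print(retained_software_qualities(True, False))
# prints: ['SECURITY', 'RELIABILITY']
```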

CWE Filtering¶

Filter findings by specific Common Weakness Enumeration (CWE) identifiers:

In the admin console Security Tool section:

- CWE IDs: Enter a comma-separated list of CWE IDs to filter findings (e.g., 79,89,502,918). No spaces. When set, this overrides "Filter CWE Top 25" and "Additional CWE IDs".

- Filter CWE Top 25 (Deprecated): Select to retrieve only findings from the SANS CWE Top 25 list. Ignored when "CWE IDs" is set.

- Additional CWE IDs (Deprecated): Enter comma-separated CWE IDs to include (e.g., 611,918,1234). No spaces. Ignored when "CWE IDs" is set.

platform:
  sonar:
    # Explicit CWE ID list (overrides filterCweTop25 and additionalCweIds)
    cweIds: "79,89,502,918"
    # Deprecated - use cweIds instead
    # filterCweTop25: true
    # additionalCweIds: "611,918,1234"
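Because the CWE fields accept only digits separated by commas, it can help to validate a value before deploying it. The sketch below checks the format and models the precedence rule described above, where an explicit list wins over the deprecated options. The function names and the returned dictionary shape are hypothetical, and how the two deprecated options combine with each other is not modeled.

```python
import re

# One or more numeric IDs separated by single commas, with no spaces.
_CWE_LIST = re.compile(r"\d+(,\d+)*")


def parse_cwe_ids(value: str) -> list[int]:
    """Parse a value such as "79,89,502,918" or raise ValueError."""
    if not _CWE_LIST.fullmatch(value):
        raise ValueError(f"expected comma-separated CWE IDs without spaces: {value!r}")
    return [int(part) for part in value.split(",")]


def effective_cwe_filter(cwe_ids: str = "", filter_top25: bool = False,
                         additional_cwe_ids: str = "") -> dict:
    """Model the documented precedence: cweIds overrides the deprecated options."""
    if cwe_ids:
        return {"mode": "explicit", "ids": parse_cwe_ids(cwe_ids)}
    extra = parse_cwe_ids(additional_cwe_ids) if additional_cwe_ids else []
    return {"mode": "legacy", "top25": filter_top25, "additional": extra}


print(effective_cwe_filter(cwe_ids="79,89,502,918", filter_top25=True))
# prints: {'mode': 'explicit', 'ids': [79, 89, 502, 918]}
```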

Example Configurations¶

Custom CWE list (recommended):

platform:
  sonar:
    cweIds: "79,89,502,918"
    excludeMaintainabilityFindings: true
    excludeReliabilityFindings: true

Security + Reliability (no code smells):

platform:
  sonar:
    excludeMaintainabilityFindings: true

Legacy: SANS Top 25 only (deprecated):

platform:
  sonar:
    filterCweTop25: true
    excludeMaintainabilityFindings: true
    excludeReliabilityFindings: true

Veracode Integration¶

Requirements¶

Veracode integration requires:

- A Veracode Key ID and Key Secret

Configuration¶

For embedded cluster deployments, navigate to the admin console, Config tab and then to the Security Tool section.

Select the Veracode checkbox to enable Veracode integration.

Enter the following information in the configuration fields:

- Key ID: Your Veracode key ID

- Key Secret: Your Veracode key secret

For Helm deployments, add the following to your values.yaml:

platform:
  veracode:
    apiKeyId: "your-veracode-key-id"
    apiKeySecret: "your-veracode-key-secret"
    # Use existing secret instead of creating one
    existingSecret: ""
    secretKeys:
      # -- The secret key containing the apiKeySecret
      apiKeySecretKey: "apiKeySecret"

Checkmarx Integration¶

Checkmarx integration allows Pixee Enterprise Server to communicate with your existing Checkmarx One platform to analyze, react to, fix and update security vulnerabilities found in SAST scans.

Requirements¶

Checkmarx integration requires:

- A Checkmarx tenant account name. You can find this by going to your Checkmarx One platform and navigating to the Settings > Identity and Access Management section. The tenant account name appears above the GUID that is your tenant ID. Be sure to use the account name, not the GUID.

- An API key with access to retrieve scan results and projects

- Knowledge of your Checkmarx region (US, US2, EU, EU2, DEU, ANZ, IND, SNG, or MEA)

Configuration¶

For embedded cluster deployments, navigate to the admin console, Config tab and then to the Security Tool section.

Select the Checkmarx checkbox to enable Checkmarx integration.

Enter the following information in the configuration fields:

- Region: Your Checkmarx region (defaults to US)

- Tenant Account Name: Your Checkmarx tenant account name

- API Key: Your Checkmarx API key

For Helm deployments, add the following to your values.yaml:

platform:
  checkmarx:
    region: "US" # Available regions: US, US2, EU, EU2, DEU, ANZ, IND, SNG, MEA
    tenantAccountName: "your-checkmarx-tenant-account-name"
    apiKey: "your-checkmarx-api-key"

Supported Regions¶

Checkmarx operates in multiple regions worldwide. The following regions are supported:

- US: Default US environment (https://ast.checkmarx.net)

- US2: Second US environment (https://us.ast.checkmarx.net)

- EU: European environment (https://eu.ast.checkmarx.net)

- EU2: Second European environment (https://eu-2.ast.checkmarx.net)

- DEU: Germany environment (https://deu.ast.checkmarx.net)

- ANZ: Australia & New Zealand environment (https://anz.ast.checkmarx.net)

- IND: India environment (https://ind.ast.checkmarx.net)

- SNG: Singapore environment (https://sng.ast.checkmarx.net)

- MEA: UAE/Middle East environment (https://mea.ast.checkmarx.net)

Make sure to select the region that matches your Checkmarx AST tenant.
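A quick way to confirm which region code matches your tenant is to compare the URL you use to sign in against the list above. The lookup below mirrors that list; the helper name is hypothetical, and defaulting to US follows the default stated in the configuration section.

```python
CHECKMARX_BASE_URLS = {
    "US": "https://ast.checkmarx.net",
    "US2": "https://us.ast.checkmarx.net",
    "EU": "https://eu.ast.checkmarx.net",
    "EU2": "https://eu-2.ast.checkmarx.net",
    "DEU": "https://deu.ast.checkmarx.net",
    "ANZ": "https://anz.ast.checkmarx.net",
    "IND": "https://ind.ast.checkmarx.net",
    "SNG": "https://sng.ast.checkmarx.net",
    "MEA": "https://mea.ast.checkmarx.net",
}


def checkmarx_base_url(region: str = "US") -> str:
    """Return the base URL for a region code, or raise ValueError."""
    try:
        return CHECKMARX_BASE_URLS[region.upper()]
    except KeyError:
        supported = ", ".join(CHECKMARX_BASE_URLS)
        raise ValueError(f"unknown region {region!r}; expected one of: {supported}") from None


print(checkmarx_base_url("EU2"))
# prints: https://eu-2.ast.checkmarx.net
```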

How It Works¶

The Checkmarx integration operates as follows:

- Project Discovery: Pixee Enterprise Server discovers Checkmarx projects associated with your repositories

- Scan Retrieval: The latest SAST scan results are fetched from the Checkmarx AST platform

- Vulnerability Analysis: SAST vulnerabilities are converted to SARIF format and analyzed by Pixee's security analysis engine

- Fix Generation: Pixee identifies applicable fixes for the discovered vulnerabilities

- Pull Request Creation: Automatic fixes are applied and submitted as pull requests to the repository

The integration uses Checkmarx's REST API to retrieve project information, scan results, and vulnerability details.
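To make the Vulnerability Analysis step more concrete, the sketch below shows what converting a finding to SARIF 2.1.0 can look like. It is not Pixee's implementation: the input field names (query_name, description, file, line) and the tool name are hypothetical stand-ins for whatever the Checkmarx API returns, and only the SARIF output structure follows the published format.

```python
import json


def to_sarif(findings: list[dict], tool_name: str = "Checkmarx One SAST") -> dict:
    """Wrap simplified findings in a minimal SARIF 2.1.0 log."""
    results = []
    for finding in findings:
        results.append({
            "ruleId": finding["query_name"],
            "message": {"text": finding["description"]},
            "locations": [{
                "physicalLocation": {
                    "artifactLocation": {"uri": finding["file"]},
                    "region": {"startLine": finding["line"]},
                }
            }],
        })
    return {
        "version": "2.1.0",
        "runs": [{"tool": {"driver": {"name": tool_name}}, "results": results}],
    }


# A made-up finding, for illustration only.
example = [{
    "query_name": "SQL_Injection",
    "description": "User input reaches a SQL query without sanitization.",
    "file": "src/main/java/com/example/UserDao.java",
    "line": 42,
}]
print(json.dumps(to_sarif(example), indent=2))
```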

GitLab SAST Integration¶